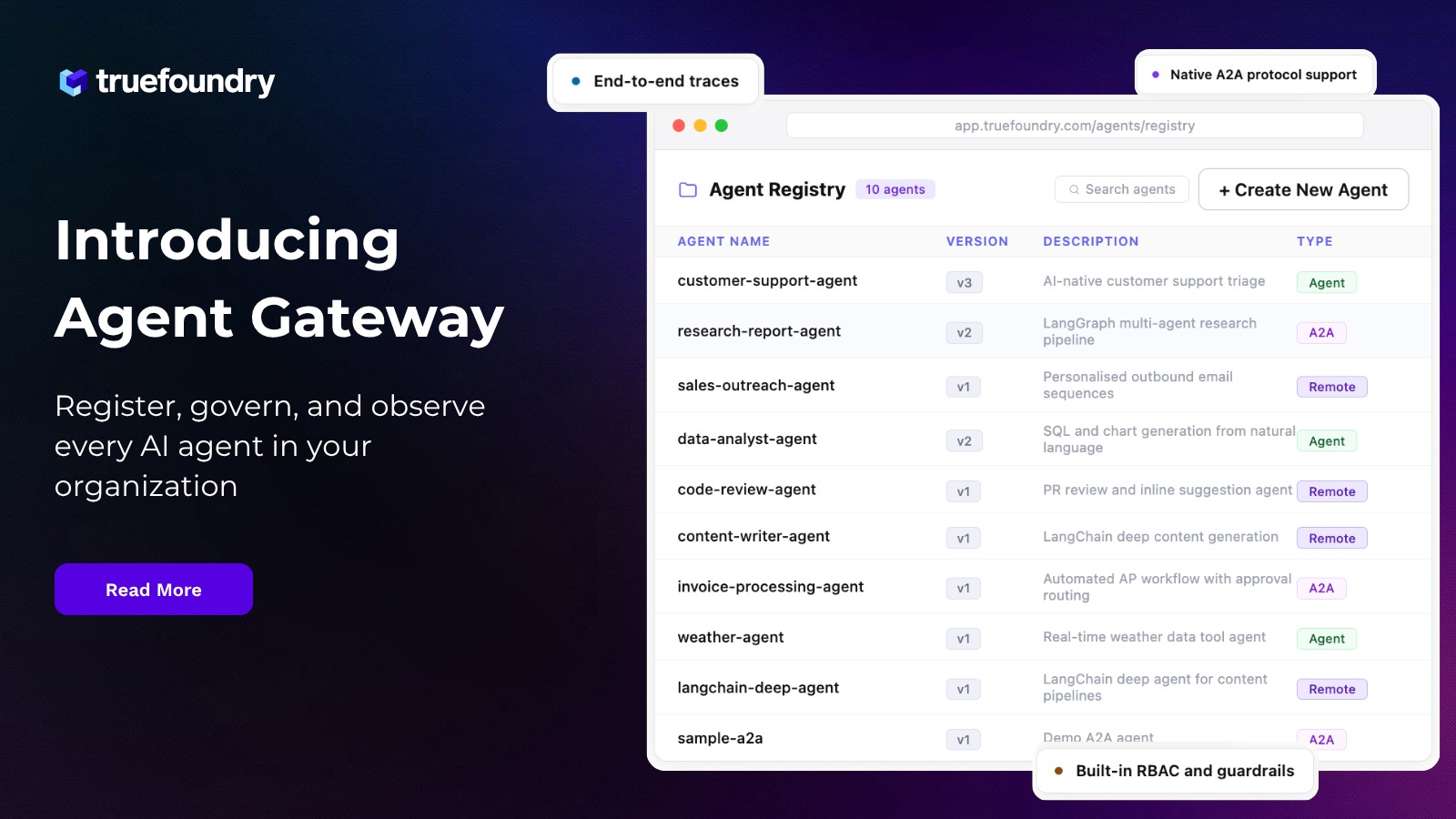

Deployment Platform Updates


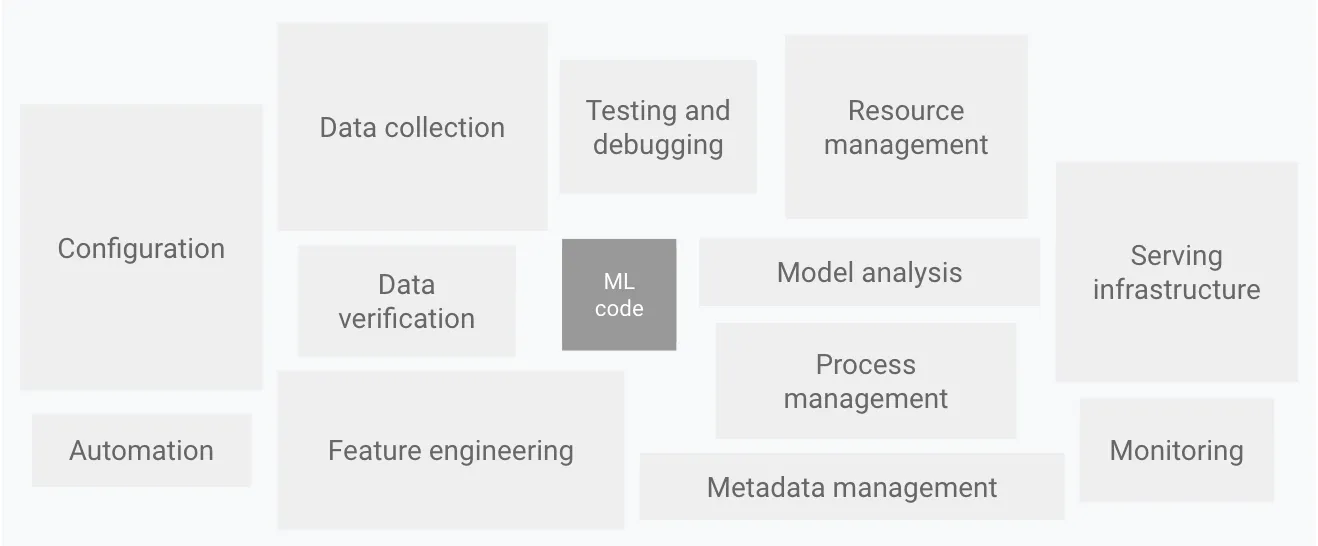

The TrueFoundry team has spent the last month adding features to our ML deployment platform. Our goal is a deployment platform that makes it easy to deploy ML models and services while enforcing sound engineering and security principles. A great ML platform needs a solid engineering platform underneath it, which is why much of the initial focus has been on delivering a reliable platform for deploying code.

Out of all the pieces of the ML platform described above, we focus on the serving infrastructure, monitoring, and the automation around them.

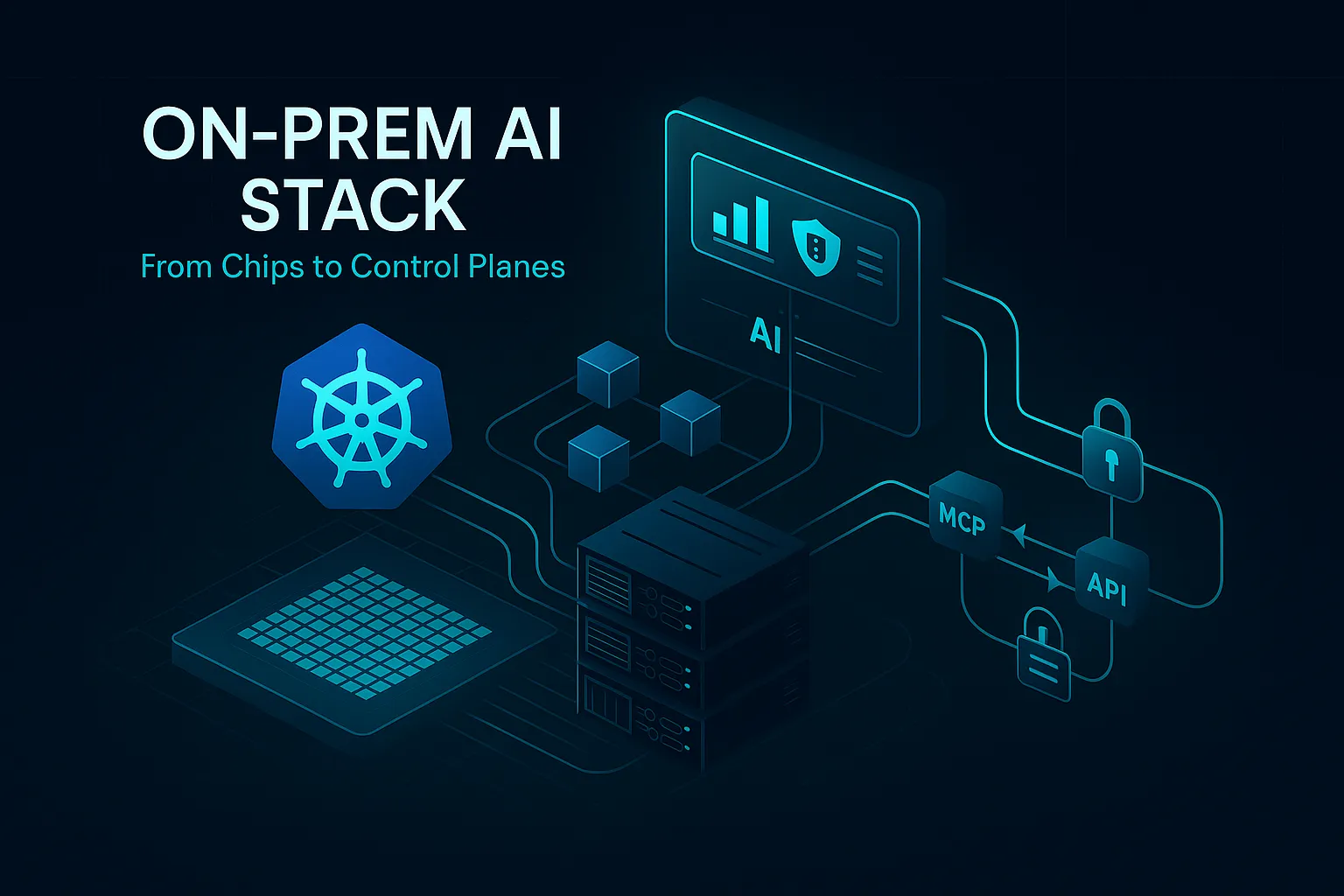

A lot of work went into building our deployment platform on top of Kubernetes. The goal is to make it possible to deploy in under 5 minutes: the platform builds the image from the source code, stores it in a Docker registry, and then deploys the application on Kubernetes. Updates from the last month include the following:
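To make the build, store, deploy flow concrete, here is a minimal sketch of the three stages as plain CLI commands. It is an illustration only, not how the platform is implemented internally. The function names, the registry host, and the assumption that a Kubernetes Deployment with a container named after the app already exists are all hypothetical.

```python
from typing import List


def image_tag(registry: str, app: str, version: str) -> str:
    """Compose a fully qualified image reference such as registry/app:version."""
    return f"{registry.rstrip('/')}/{app}:{version}"


def deploy_commands(source_dir: str, registry: str, app: str, version: str) -> List[List[str]]:
    """Return the commands for each stage without running them.

    Assumption: a Deployment called `app` with a container called `app`
    already exists in the current kubectl context.
    """
    tag = image_tag(registry, app, version)
    return [
        # 1. Build the image from the source directory.
        ["docker", "build", "-t", tag, source_dir],
        # 2. Store the image in the registry.
        ["docker", "push", tag],
        # 3. Point the existing Deployment at the new image.
        ["kubectl", "set", "image", f"deployment/{app}", f"{app}={tag}"],
    ]


if __name__ == "__main__":
    for cmd in deploy_commands("./src", "registry.example.com", "demo", "v1"):
        print(" ".join(cmd))
```

Returning the commands instead of executing them keeps the sketch safe to run anywhere; each list could be passed to `subprocess.run(cmd, check=True)` on a machine that has Docker and kubectl configured.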

Ability to choose instance family while deploying

Machine learning models can show very different inference latency and performance depending on the instance type. For example, when testing the inference latency of a Hugging Face model on Intel vs. AMD processors, we found the Intel processors to be around 30% faster. That’s why users can now choose the instance type when deploying their workloads. If no instance type is selected, the workload can be deployed on any available instance type.
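On Kubernetes, one common way to pin a workload to an instance type is a `nodeSelector` on the well-known node label `node.kubernetes.io/instance-type`. The sketch below builds a pod spec fragment that way; it is an assumption about how such an option could map onto Kubernetes, not a description of the platform's actual implementation. The function name, container name, and the example instance type are hypothetical.

```python
from typing import Any, Dict, Optional

# Well-known Kubernetes node label; cloud providers populate it on their nodes.
INSTANCE_TYPE_LABEL = "node.kubernetes.io/instance-type"


def pod_spec(image: str, instance_type: Optional[str] = None) -> Dict[str, Any]:
    """Build a minimal pod spec, optionally restricted to one instance type."""
    spec: Dict[str, Any] = {
        "containers": [{"name": "model-server", "image": image}],
    }
    if instance_type:
        # Only nodes carrying this label value are eligible for scheduling.
        spec["nodeSelector"] = {INSTANCE_TYPE_LABEL: instance_type}
    # With no nodeSelector, the scheduler may pick any available node.
    return spec


if __name__ == "__main__":
    print(pod_spec("registry.example.com/demo:v1", "c5.xlarge"))
    print(pod_spec("registry.example.com/demo:v1"))
```

Leaving `instance_type` unset omits the selector entirely, which matches the default behaviour described above.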

Logs and Metrics for Deployments

We previously provided a Grafana link for viewing logs and metrics. While Grafana is highly customizable, permission and access control wasn’t really possible with it. It also turned out to be a bit slow and hard to understand for users who weren’t used to Grafana. That’s why we built our own UI for logs and metrics, which should suffice in most cases. We still offer the Grafana integration in public cloud for more advanced users.

Permission Control On Secret Groups

Users can now be added to secret groups as viewer, editor, or admin.
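As a rough illustration of how three ordered roles can gate access, the sketch below checks a user's role on a secret group against the minimum role an action requires. Only the role names come from the text above; the actions and what each role is allowed to do are assumptions made for the example.

```python
from enum import IntEnum
from typing import Dict


class Role(IntEnum):
    VIEWER = 1
    EDITOR = 2
    ADMIN = 3


# Assumed minimum role per action (hypothetical, for illustration only).
REQUIRED_ROLE: Dict[str, Role] = {
    "read_secret": Role.VIEWER,
    "write_secret": Role.EDITOR,
    "manage_members": Role.ADMIN,
}


def is_allowed(members: Dict[str, Role], user: str, action: str) -> bool:
    """Return True if `user` holds a role high enough for `action`."""
    role = members.get(user)
    if role is None:
        # Users who are not members of the group get no access.
        return False
    return role >= REQUIRED_ROLE[action]


if __name__ == "__main__":
    group = {"alice": Role.ADMIN, "bob": Role.VIEWER}
    print(is_allowed(group, "bob", "read_secret"))
    print(is_allowed(group, "bob", "write_secret"))
```

Ordering the roles as integers keeps the check to a single comparison, at the cost of assuming each role strictly includes the permissions of the one below it.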

GitHub and Bitbucket integration

Users can now deploy directly to TrueFoundry from any GitHub or Bitbucket repository. They can connect their own private repositories using the OAuth flow and select the appropriate parameters to deploy the application.

Over the next month, we are working on a few exciting features:

- Making the platform more intuitive and easy to use

- Automated deployment of the TrueFoundry stack on any Kubernetes cluster

- Support for teams

- Deployment rollback functionality

Stay tuned and let us know your feedback!

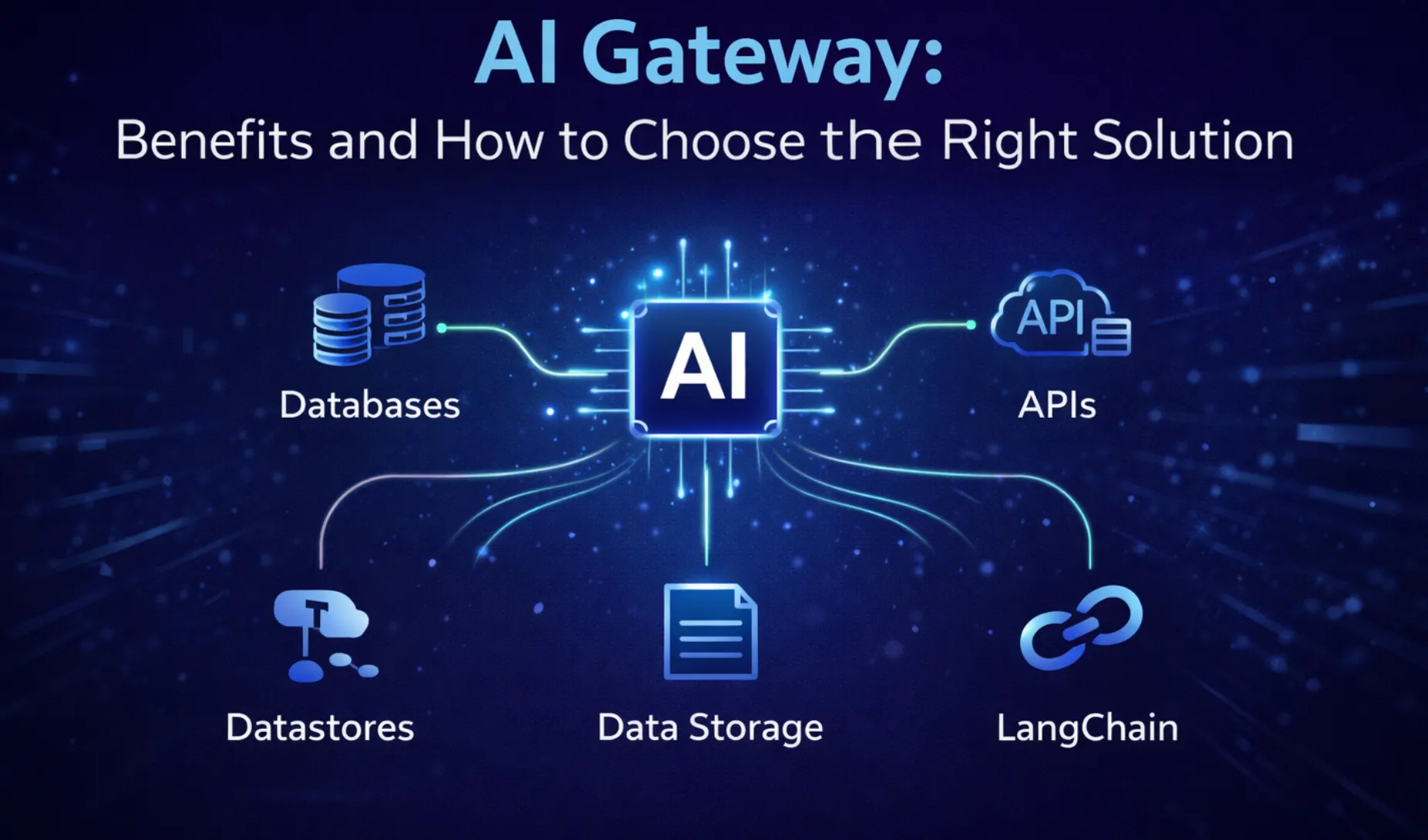

