Secure AI Gateway with Centralized MCP for Enterprises


Large language models can now reason, code and generate with breathtaking fluency. Yet they remain disconnected from the data, workflows and APIs that make your business unique. The result: powerful models that still need humans to copy-paste data between tools.

We have entered an age of agentic AI where LLM‑powered agents can autonomously call tools, trigger incidents or update CRMs. But to act safely and reliably, agents need a universal way to discover and invoke enterprise APIs.

That universal port is the Model Context Protocol (MCP). In this post we unpack MCP, why it matters for enterprises, and how TrueFoundry’s new AI Gateway with MCP support turns the vision into production reality.

We recently hosted an in-depth webinar on using Model Context Protocol (MCP) in enterprise settings.

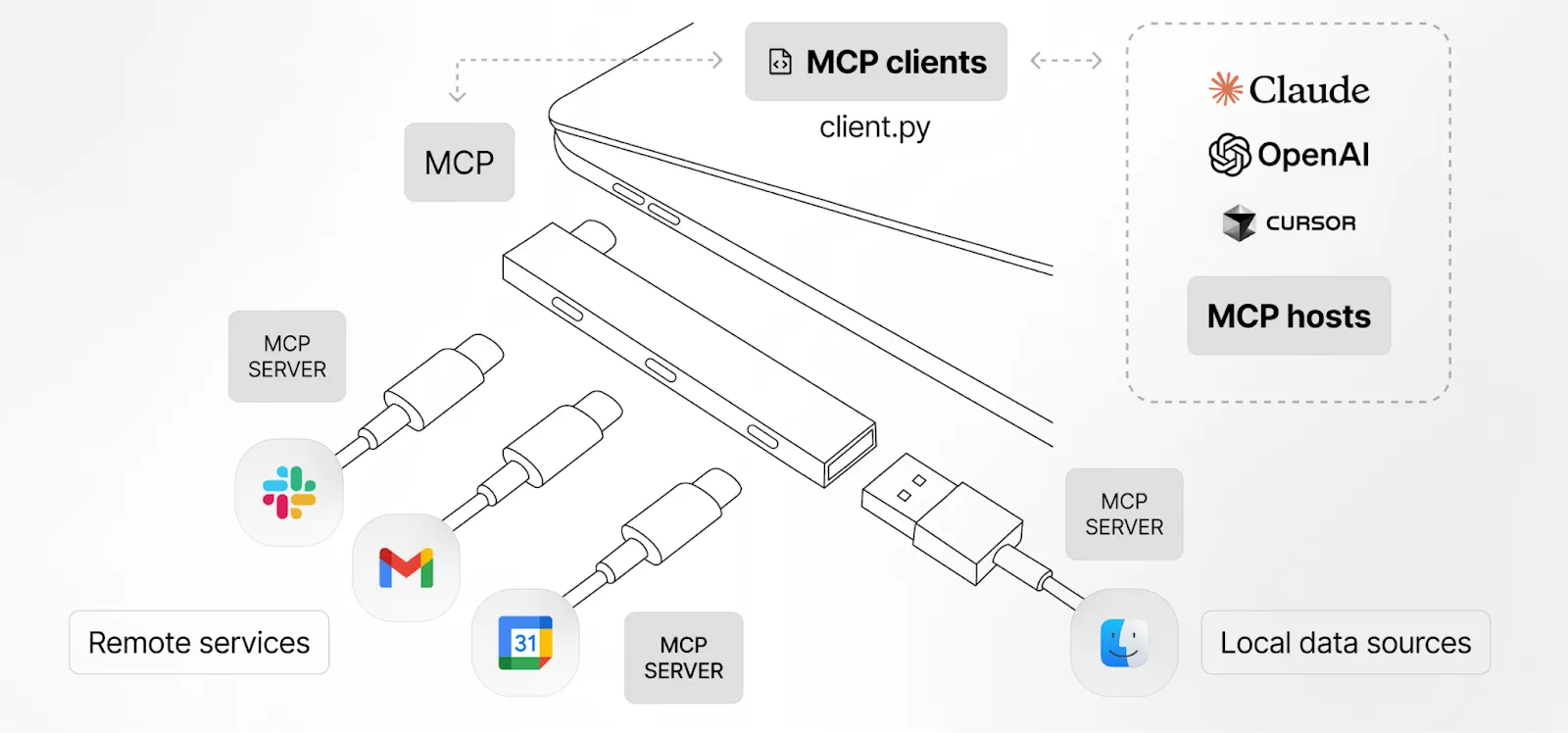

What is Model Context Protocol (MCP)?

MCP is an open standard that lets software expose its API surface in an LLM‑friendly schema—think of it as USB‑C for AI applications. An MCP server publishes tool definitions, authentication requirements and usage descriptions that an LLM can read at runtime.
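To make this concrete, here is a minimal sketch of what one published tool definition might look like, written as a Python dict. The `name`, `description` and `inputSchema` fields follow the tool shape in the MCP specification; the `customer_id` parameter and the `missing_arguments` helper are assumptions made up for illustration, not a real Stripe MCP schema.

```python
from __future__ import annotations

import json

# Hypothetical tool definition, shaped like one entry in an MCP server's tool list.
# inputSchema is ordinary JSON Schema describing the arguments the tool accepts.
GET_CUSTOMER_DETAILS = {
    "name": "get_customer_details",
    "description": "Return the name and contact information for one customer.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "customer_id": {"type": "string", "description": "Customer identifier"},
        },
        "required": ["customer_id"],
    },
}


def missing_arguments(tool: dict, arguments: dict) -> list[str]:
    """Return the required argument names that the caller did not supply."""
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


# An LLM (or a gateway) can read the definition at runtime and check a call against it.
print(json.dumps(GET_CUSTOMER_DETAILS, indent=2))
print(missing_arguments(GET_CUSTOMER_DETAILS, {}))
```

Because the schema is plain JSON, the same definition can be shown to a model, validated by a gateway, and listed in a registry without any tool-specific code.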

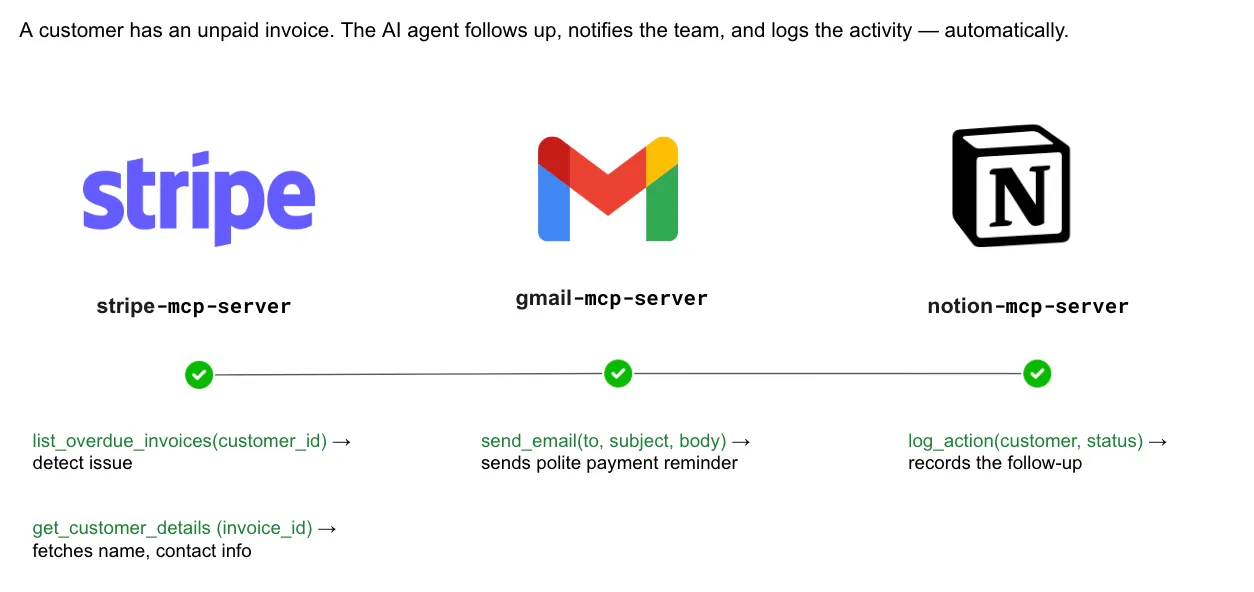

With MCP, you can build an invoice agent. When a customer’s invoice slips past its due date, the agent will use the following workflow:

- Stripe MCP: Calls list_overdue_invoices to identify unpaid bills, then get_customer_details to pull the customer’s name and contact information.

- Gmail MCP: Crafts and sends a courteous reminder email via send_email.

- Notion MCP: Records the outreach with log_action, so finance and account teams have an up-to-date audit trail.

The entire workflow (detect, notify, record) executes autonomously, eliminating manual context-switching and data entry. Because every step is encapsulated in a reusable MCP call, the same architecture extends to other finance operations, from subscription renewals to vendor disbursements, providing a consistent automation layer.
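The detect, notify and record steps can be sketched in a few lines of Python. This is an illustration under stated assumptions, not TrueFoundry's implementation: `FakeMCPClient`, its `call_tool` method, and every argument and response field are hypothetical stand-ins, and in a real agent the LLM would choose the order of calls rather than following a hard-coded sequence. Only the four tool names come from the workflow above.

```python
from dataclasses import dataclass, field


@dataclass
class FakeMCPClient:
    """Stand-in for an MCP client: records each call and returns canned results."""
    responses: dict
    calls: list = field(default_factory=list)

    def call_tool(self, server: str, tool: str, arguments: dict):
        self.calls.append((server, tool, arguments))
        return self.responses[tool]


def remind_overdue_customers(client) -> int:
    """Detect overdue invoices, notify each customer, and record the outreach."""
    invoices = client.call_tool("stripe", "list_overdue_invoices", {})
    for invoice in invoices:
        customer = client.call_tool(
            "stripe", "get_customer_details", {"customer_id": invoice["customer_id"]}
        )
        client.call_tool("gmail", "send_email", {
            "to": customer["email"],
            "subject": f"Reminder: invoice {invoice['id']} is overdue",
            "body": f"Hi {customer['name']}, a quick reminder about invoice {invoice['id']}.",
        })
        client.call_tool("notion", "log_action", {
            "action": "overdue_reminder_sent",
            "invoice_id": invoice["id"],
        })
    return len(invoices)


demo_client = FakeMCPClient(responses={
    "list_overdue_invoices": [{"id": "inv_1", "customer_id": "cus_1"}],
    "get_customer_details": {"name": "Jane", "email": "jane@example.com"},
    "send_email": {"status": "sent"},
    "log_action": {"status": "logged"},
})
print(remind_overdue_customers(demo_client), "reminder(s) sent")
```

Swapping the fake client for a real MCP client would leave the workflow function unchanged, which is the reuse the paragraph above describes.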

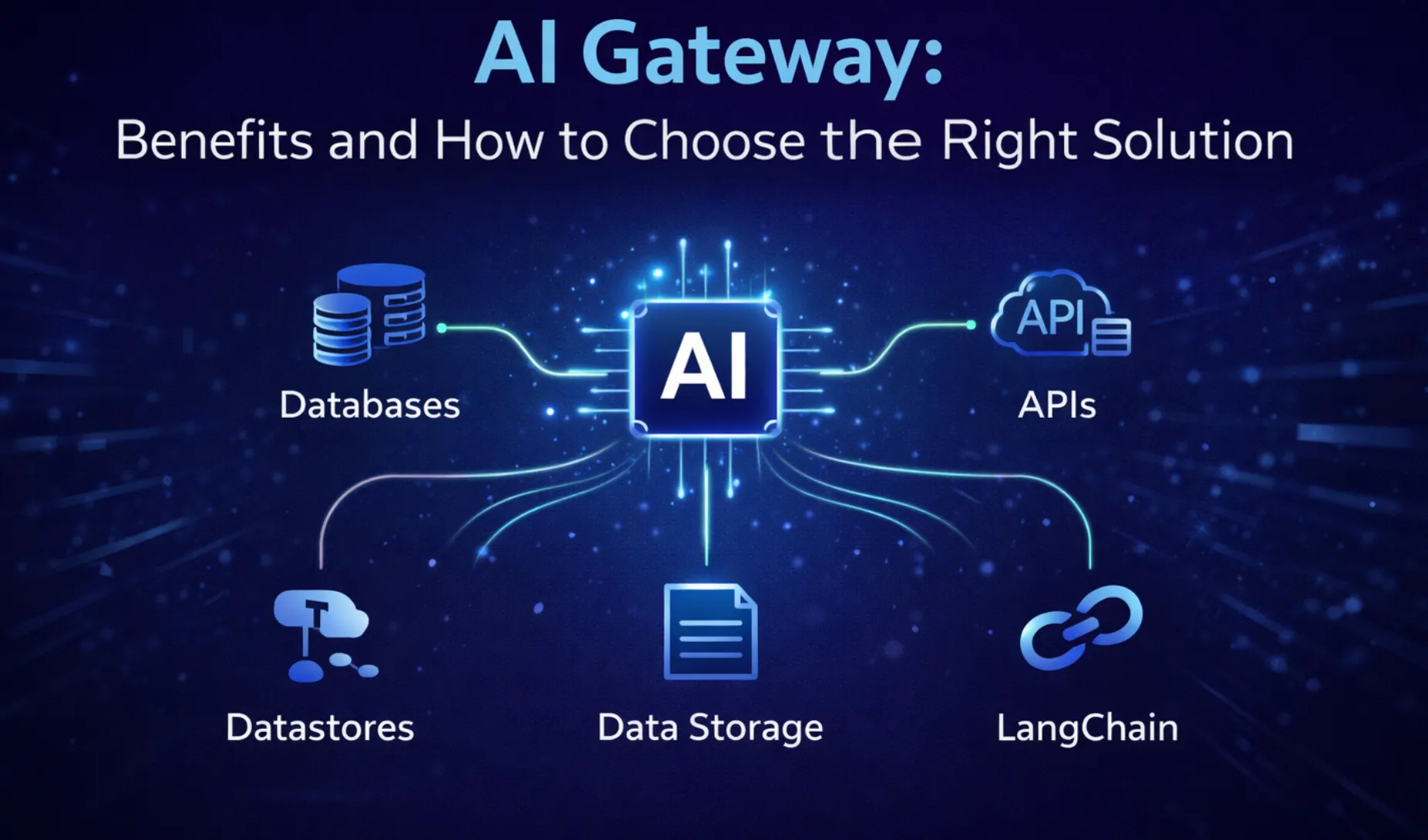

What are the Benefits of a Secure AI Gateway with MCP?

As organizations increasingly integrate AI into daily operations, securing data and controlling AI interactions has become essential. A secure AI gateway acts as a protective layer that ensures safe, compliant, and efficient AI usage.

- Enhanced data protection: Safeguards sensitive information by encrypting data and preventing unauthorized access during AI processing.

- Centralized security control: Acts as a single checkpoint to monitor, filter, and manage all AI-related requests and responses.

- Compliance support: Helps organizations meet regulations like GDPR and data protection laws by controlling how data is used and stored.

- Threat prevention: Detects and blocks malicious inputs, prompt injections, and harmful outputs before they reach AI systems or users.

- Access management: Enables role-based permissions, ensuring only authorized users can interact with AI tools and data.

- Audit and logging: Maintains detailed logs of AI interactions for transparency, accountability, and security audits.

- Reduced risk of data leakage: Prevents confidential business information from being exposed through AI prompts or outputs.

- Improved performance monitoring: Tracks AI usage, response quality, and system performance to optimize operations.

- Cost control: Monitors API usage and prevents misuse, helping organizations manage AI expenses effectively.

- Scalable security: Provides consistent protection as AI usage grows across teams, applications, and locations.

Operational Challenges with MCP at Scale

Deploying one demo server is easy, but rolling MCP out organization-wide is not. Common hurdles include:

- Hosting & lifecycle – Custom servers must run close to sensitive data, sometimes air‑gapped.

- Central discovery – Developers need a registry of approved MCP servers.

- Access control – Define which teams, users and applications can access which MCP servers or tools in the registry.

- Authentication & fine‑grained authorization – Ensure that a user calling an MCP server can reach only the data they are already permitted to access.

- Guardrails – Destructive tools (e.g. DELETE_CUSTOMER) should require human approval.

- Observability & cost controls – Without tracing, runaway agents can spam APIs or rack up bills.
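As one illustration of the guardrail hurdle, the sketch below blocks a destructive tool unless a human approver is named. The `guarded_call` wrapper, the `ApprovalRequired` exception and the `approved_by` parameter are hypothetical names chosen for this example; a production gateway would more likely pause the agent and wait on an approval workflow than raise an exception.

```python
# Tools that must never run without a named human approver (example list).
DESTRUCTIVE_TOOLS = {"DELETE_CUSTOMER"}


class ApprovalRequired(Exception):
    """Raised when a destructive tool is called without human approval."""


def guarded_call(tool_name, arguments, execute, approved_by=None):
    """Run execute(tool_name, arguments), refusing unapproved destructive tools."""
    if tool_name in DESTRUCTIVE_TOOLS and approved_by is None:
        raise ApprovalRequired(f"{tool_name} needs human approval before it can run")
    return execute(tool_name, arguments)


def fake_execute(tool_name, arguments):
    # Stand-in for the real MCP tool invocation.
    return f"{tool_name} executed"


try:
    guarded_call("DELETE_CUSTOMER", {"customer_id": "cus_1"}, fake_execute)
except ApprovalRequired as exc:
    print("blocked:", exc)

print(guarded_call("DELETE_CUSTOMER", {"customer_id": "cus_1"}, fake_execute,
                   approved_by="ops-lead"))
```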

MCP-Specific Governance and Control

As organizations adopt Model Context Protocol (MCP) to connect AI models with tools, APIs, and enterprise data, strong governance is essential to ensure secure, compliant, and well-managed interactions. MCP-specific controls help prevent unauthorized access, protect sensitive information, and maintain operational integrity while enabling AI innovation.

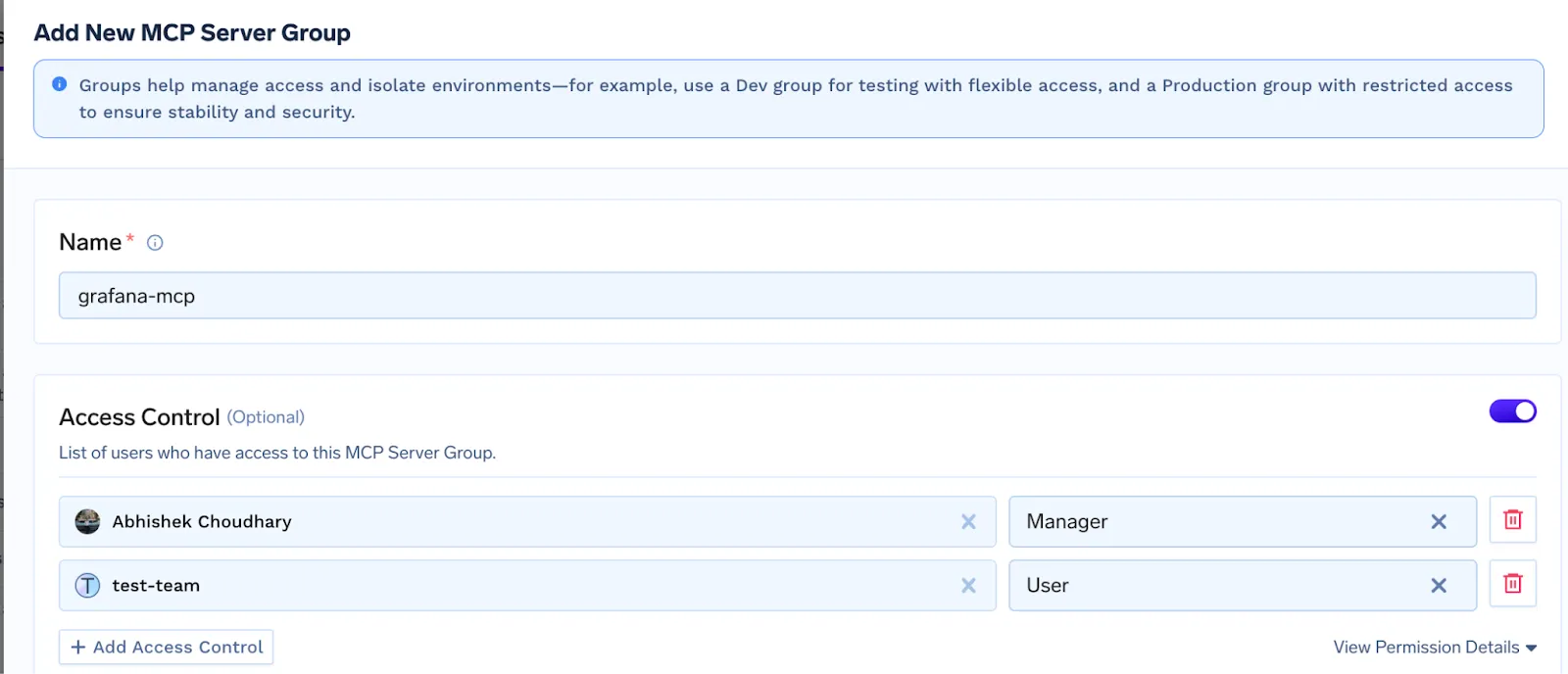

Centralized policy enforcement allows organizations to define clear rules for how AI systems access tools and data. Combined with granular access control, administrators can restrict permissions based on roles, users, or specific models, reducing misuse risks. Context-level permissions further strengthen security by ensuring only the necessary data is shared, supporting data minimization and privacy protection.

Audit trails and real-time monitoring provide transparency by tracking tool usage, data access, and unusual activity. These insights help teams detect threats early and maintain accountability. Version control and configuration management also play a key role by preventing misconfigurations and ensuring MCP integrations remain secure and consistent.

Finally, MCP governance supports compliance with regulatory requirements and enables rapid incident response. If suspicious activity occurs, affected MCP endpoints can be isolated quickly. With the right governance framework, organizations can scale MCP adoption confidently while safeguarding data, systems, and users.

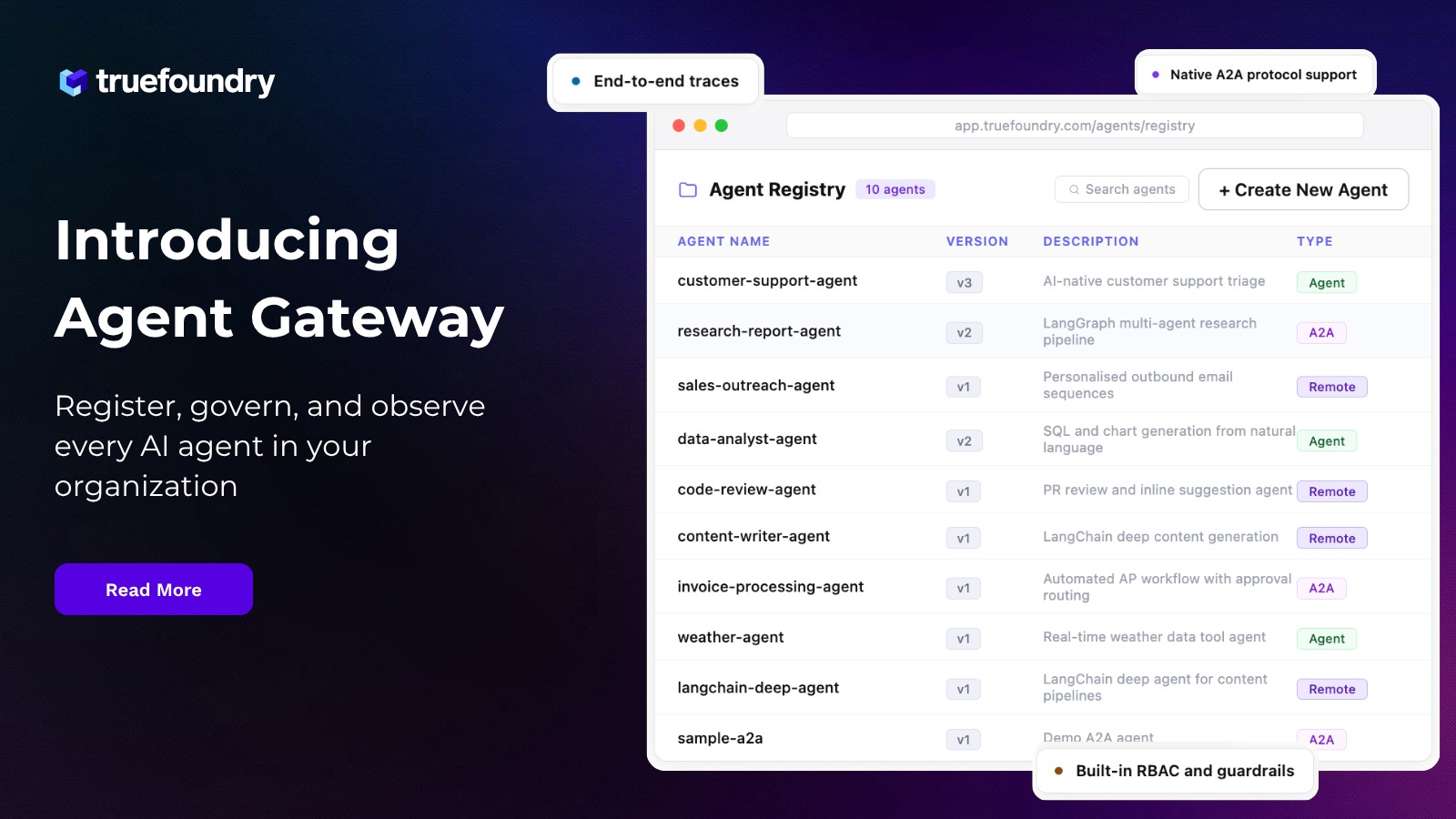

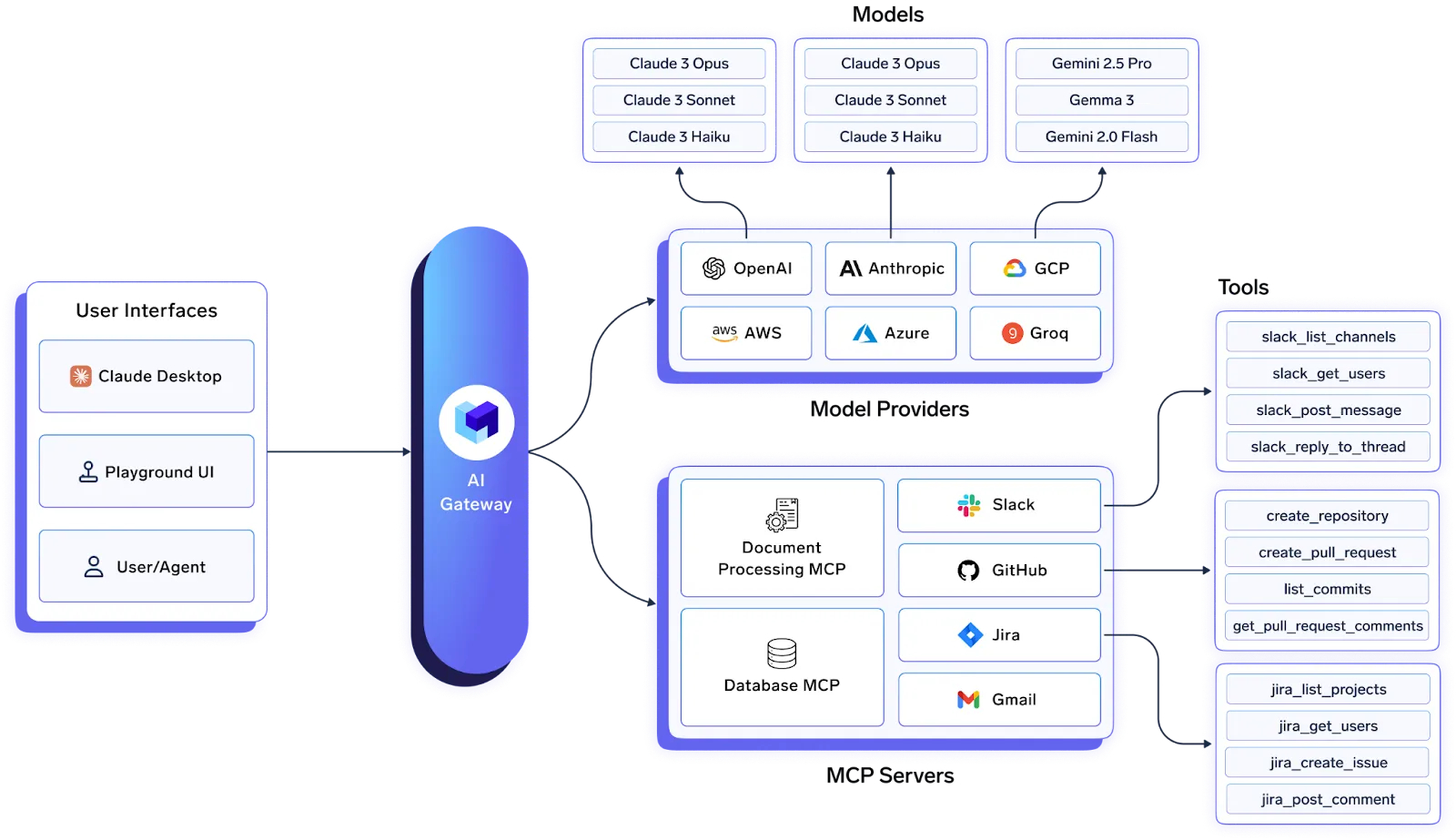

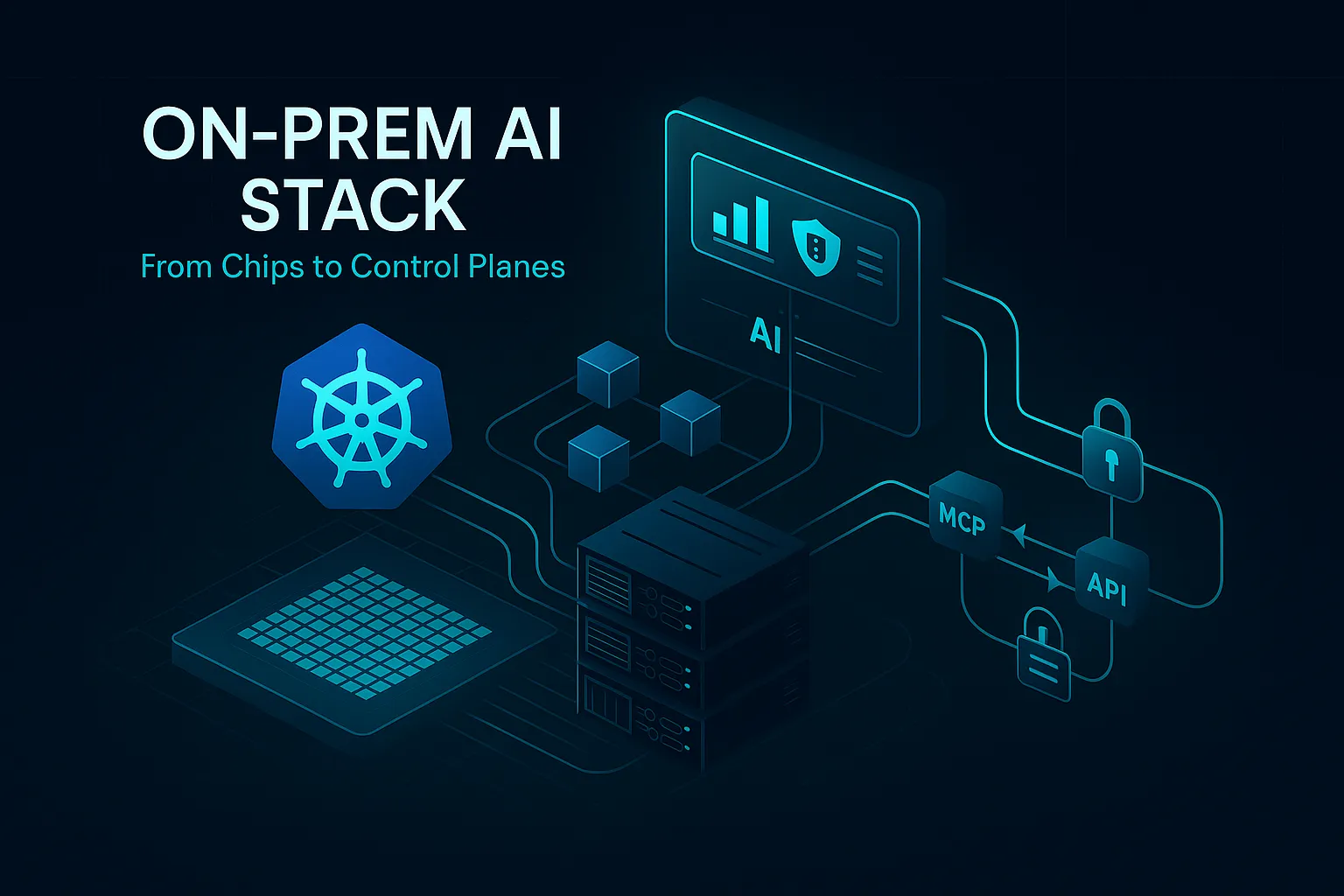

TrueFoundry’s MCP‑Enabled AI Gateway

TrueFoundry’s AI Gateway already provided a unified, low‑latency proxy for multiple LLM providers. Today we’re extending it to become the central MCP control plane inside your VPC—on any cloud or on‑prem.

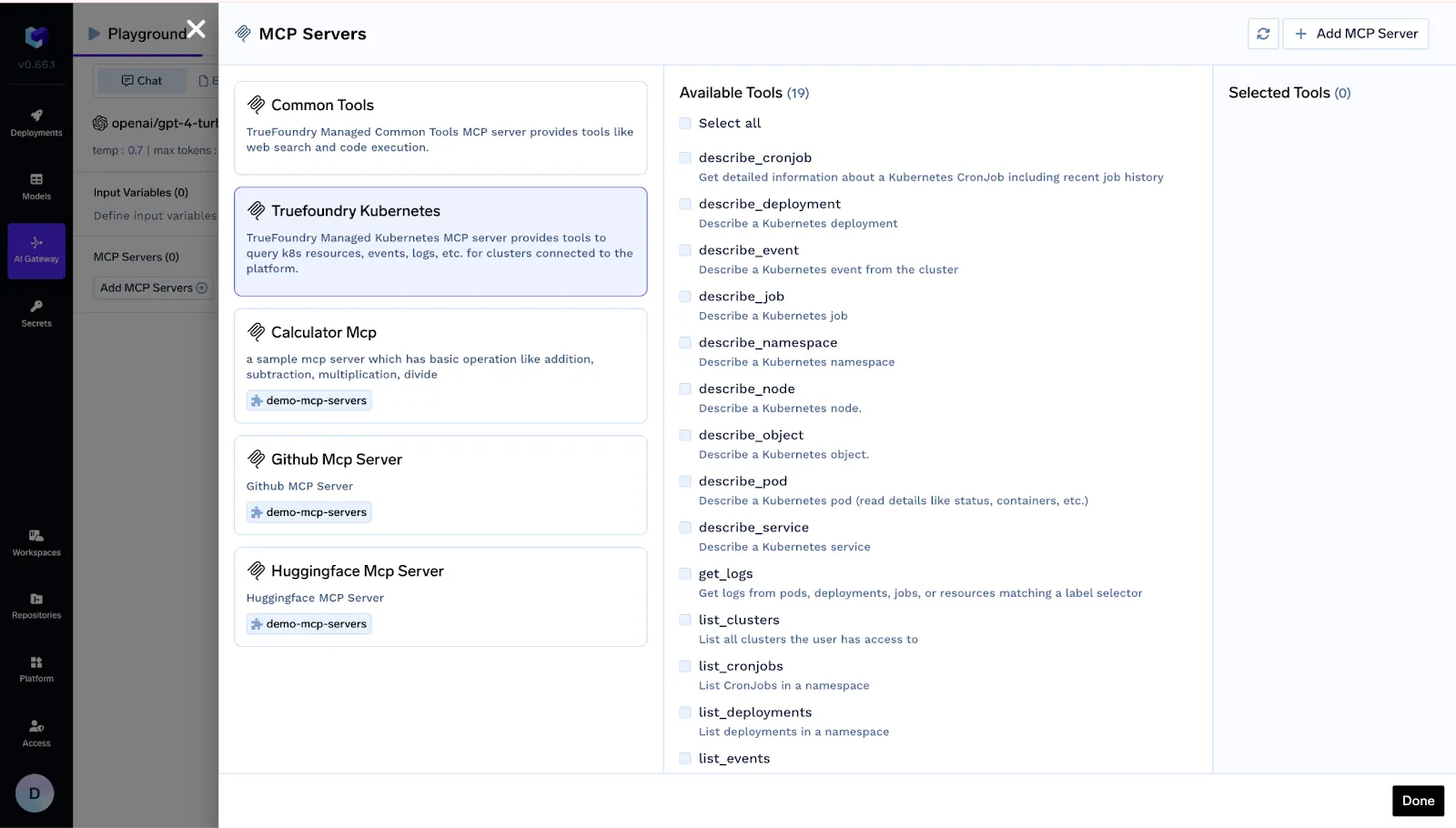

- Register public or self‑hosted MCP servers.

- Enforce OAuth‑backed auth so every tool call carries the user’s real identity.

- Apply org‑wide guardrails, rate limits and semantic caching.

- Trace every agent step for auditing and cost attribution.

Deep‑Dive: Key Gateway Capabilities

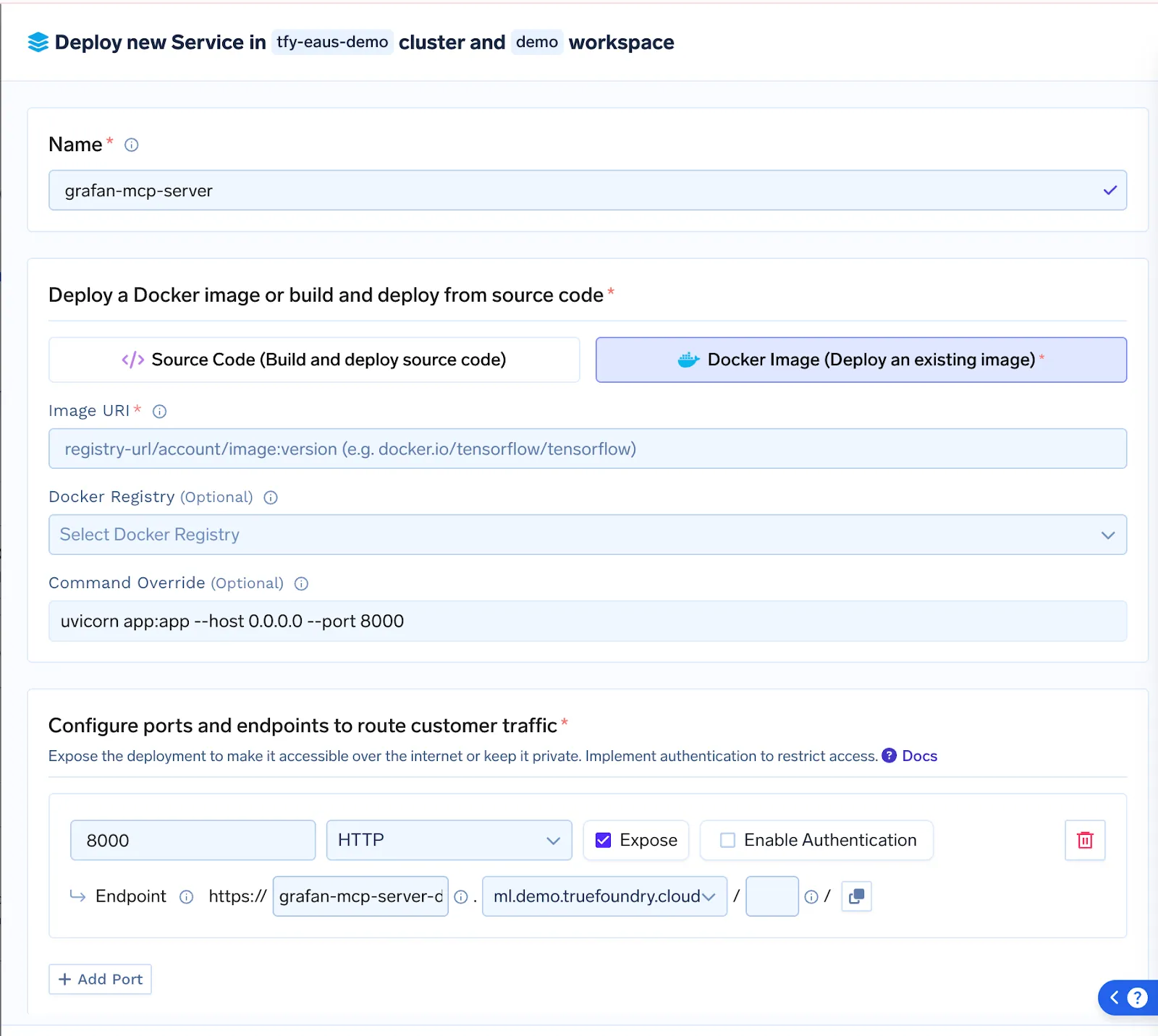

Effortless MCP Server Deployment: Ship servers as source or Docker; the Gateway handles container build, rollout and auto‑scaling with zero downtime.

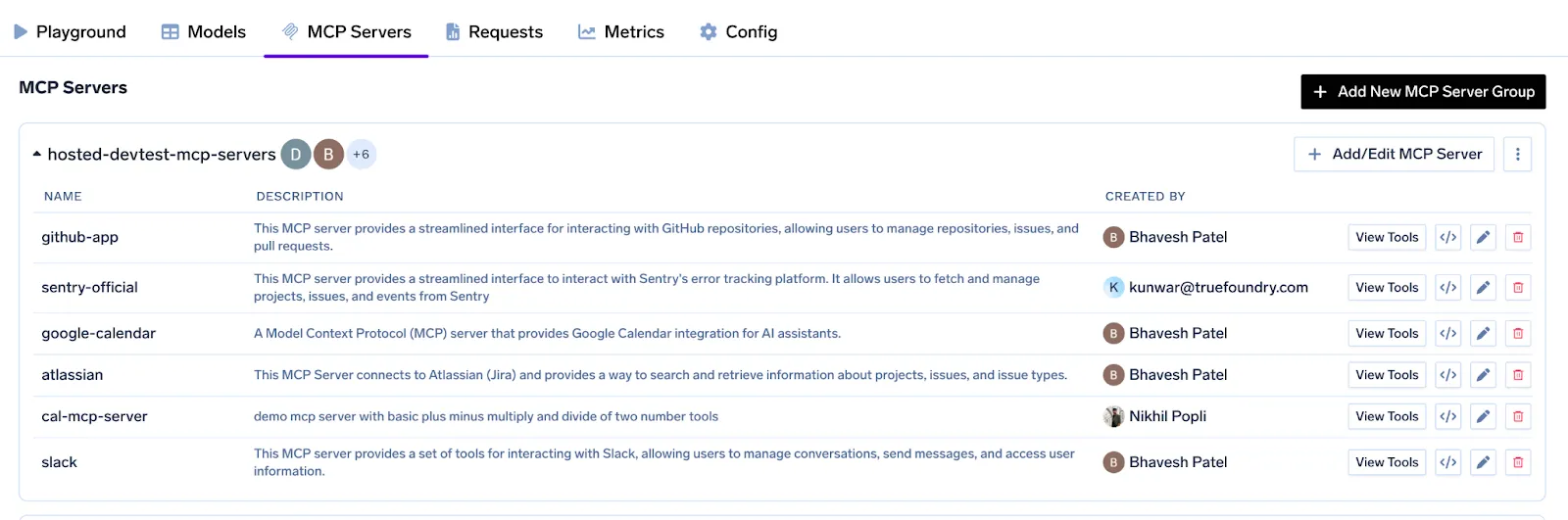

Central Registry & OAuth‑Backed Auth: A single pane lists Slack, GitHub, Salesforce—or your custom Postgres MCP—complete with token refresh logic and per‑user scopes.

Fine‑Grained Access Control: Assign servers (or individual tools) to specific teams, service accounts or environments so a staging agent can’t touch prod data.
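A policy of this kind can be pictured as a mapping from principals to the servers and tools they may use. The sketch below is an assumption about how such a check could look, not the Gateway's actual policy format: the `POLICY` structure, the `team:` and `env:` prefixes, the `"*"` wildcard and the server names are all invented for illustration.

```python
from __future__ import annotations

# Hypothetical policy: principal -> server -> allowed tool names ("*" means every tool).
POLICY = {
    "team:finance": {
        "stripe": {"list_overdue_invoices", "get_customer_details"},
        "gmail": {"*"},
    },
    "env:staging": {"postgres-staging": {"*"}},
    "env:prod": {"postgres-prod": {"*"}},
}


def is_allowed(principals: list[str], server: str, tool: str) -> bool:
    """Return True if any of the caller's principals grants access to the tool."""
    for principal in principals:
        allowed_tools = POLICY.get(principal, {}).get(server, set())
        if "*" in allowed_tools or tool in allowed_tools:
            return True
    return False  # Deny by default when no rule matches.


# A staging agent can query its own database but not the production one.
print(is_allowed(["env:staging"], "postgres-staging", "run_query"))
print(is_allowed(["env:staging"], "postgres-prod", "run_query"))
```

Denying by default means a newly registered server stays unreachable until someone explicitly assigns it.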

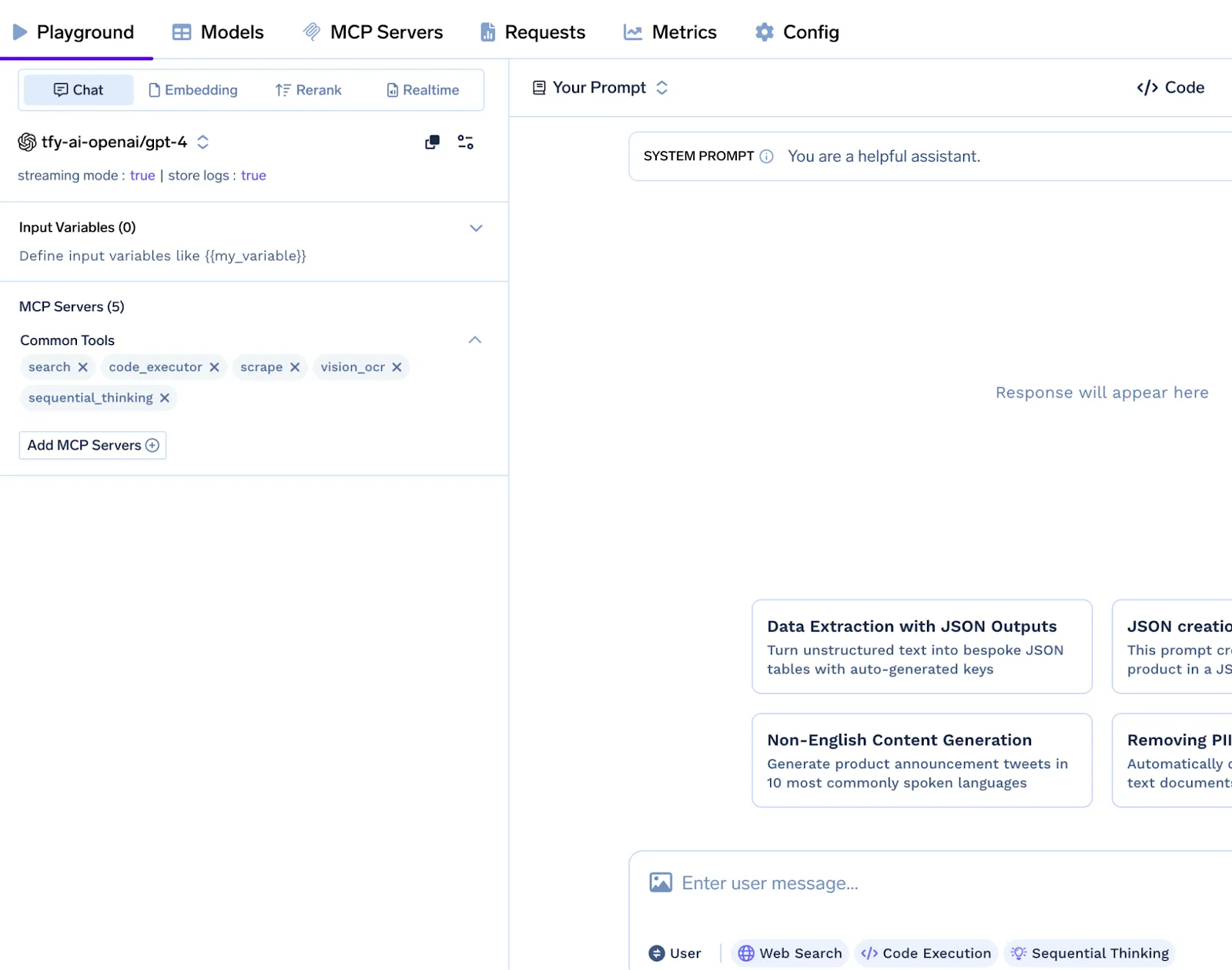

Agent Playground & Code Snippets: Spin up agents in minutes. Pick tools, write a prompt, watch real‑time traces, then copy ready‑made Python snippets into your pipeline.

Guardrails, Observability & Rate‑Limiting: PII redaction, manual‑approval hooks, per‑tool quotas and full distributed traces.
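To give a feel for PII redaction, here is a deliberately simple regex-based sketch. It is not how the Gateway detects PII; the two patterns (email addresses and US-style social security numbers) and the placeholder labels are assumptions, and real redaction typically needs far broader detection than two regular expressions.

```python
import re

# Illustrative patterns only; production PII detection covers many more cases.
PII_PATTERNS = [
    (re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"), "[REDACTED_EMAIL]"),
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[REDACTED_SSN]"),
]


def redact(text: str) -> str:
    """Replace every match of a known PII pattern with its placeholder."""
    for pattern, placeholder in PII_PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


print(redact("Contact jane@example.com, SSN 123-45-6789, about the invoice."))
```

Running the redaction before a prompt or tool result reaches the model keeps the raw values out of both the LLM context and the stored traces.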

Real‑World Use‑Cases & Demos

Below are two hands-on examples we showed during the webinar. Each one follows a clear, step‑by‑step flow so you can picture what the agent is doing under the hood.

1. Debugger Agent

Purpose — Automated triage and remediation of production failures.

Workflow:

- Accept a failing service URL from the user.

- Query the Kubernetes MCP to list active pods and stream real‑time logs.

- Parse the stack trace, then pivot to the GitHub MCP to pinpoint the exact file and line that triggered the error.

- Generate a fix on a new branch, push the commit, and open a Pull Request.

- Post the PR link to the designated Slack channel for rapid review.

2. Interview Assistant

Purpose — Deliver concise candidate briefs before interview panels meet.

Workflow:

- Scan tomorrow’s Google Calendar events whose titles contain “Interview.”

- Retrieve any attached resumes or linked Drive documents.

- Run each PDF through an OCR MCP to obtain clean text.

- Extract key details—current employer, total experience, and recent roles—while redacting personal identifiers.

- Publish a short summary in the private “Interview‑Prep” Slack channel so the panel walks in prepared.

System Architecture Overview

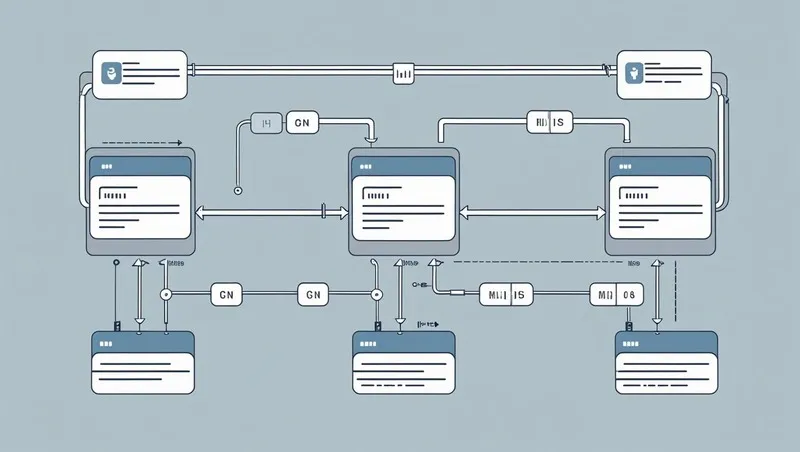

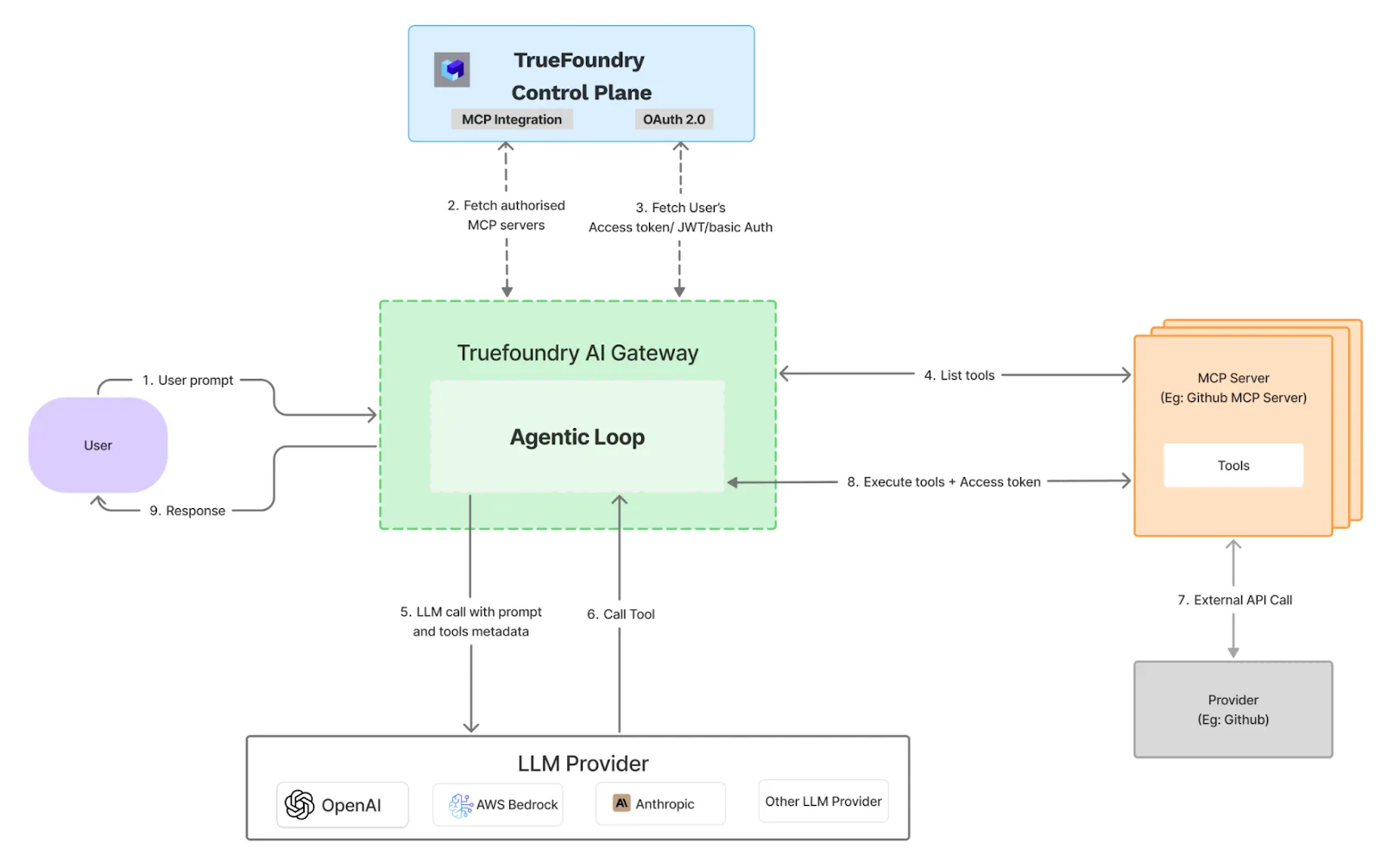

The TrueFoundry AI Gateway is a high-performance proxy that sits between the end user's agentic loop and downstream services, including Large Language Model (LLM) providers and MCP servers. It keeps latency low by executing critical functions such as authentication, rate-limiting and guardrails in memory. To maintain performance, logging and analytics data are processed asynchronously.

Request Lifecycle and Data Flow

The operational flow from user prompt to final response is executed in a precise, multi-step sequence:

1. Initial Request Ingestion

The Gateway receives an initial prompt from the user. Upon ingestion, the request is tagged with a unique identifier for tracing and immediately forwarded for processing.
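A minimal sketch of the tagging step, assuming a random UUID is an acceptable trace identifier. The `GatewayRequest` class and `ingest` function are hypothetical names; the source does not say what identifier format the Gateway uses.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class GatewayRequest:
    """An incoming prompt plus the identifier used to correlate its traces."""
    user: str
    prompt: str
    # A fresh random ID per request; every later log line can carry it.
    request_id: str = field(default_factory=lambda: uuid.uuid4().hex)


def ingest(user: str, prompt: str) -> GatewayRequest:
    """Wrap the raw prompt so downstream steps share one request_id."""
    return GatewayRequest(user=user, prompt=prompt)


request = ingest("alice", "Why is the checkout service failing?")
print(request.request_id)
```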

2. Authentication and Authorization

The Gateway communicates with the TrueFoundry Control Plane to perform two critical security functions:

- It fetches a list of authorized MCP servers that the user has permission to access based on predefined policies.

- It requests and receives fresh, short-lived access tokens for each of these authorized servers, ensuring that credentials have a limited exposure time.
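The two functions above can be sketched as a single lookup that returns one expiring token per permitted server. Everything here is an assumption for illustration: the `USER_SERVERS` table stands in for the Control Plane's policies, the tokens are random strings rather than real OAuth tokens, and the 300-second lifetime is an arbitrary example value.

```python
from __future__ import annotations

import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessToken:
    server: str
    value: str
    expires_at: float  # Seconds on the same clock as the `now` arguments below.

    def is_valid(self, now: float) -> bool:
        return now < self.expires_at


# Hypothetical policy table: which MCP servers each user may reach.
USER_SERVERS = {"alice": ["github", "slack"], "bob": ["slack"]}


def authorize(user: str, now: float, ttl_seconds: float = 300.0) -> dict[str, AccessToken]:
    """Return a fresh short-lived token for every server the user is allowed to use."""
    servers = USER_SERVERS.get(user, [])  # Unknown users get no servers at all.
    return {
        server: AccessToken(server, secrets.token_urlsafe(16), now + ttl_seconds)
        for server in servers
    }


tokens = authorize("alice", now=1000.0)
print(sorted(tokens))
print(tokens["github"].is_valid(now=1100.0), tokens["github"].is_valid(now=1400.0))
```

Passing `now` explicitly keeps the expiry logic easy to test; a real service would read a clock instead.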

3. Dynamic Tool Discovery

Using the newly acquired tokens, the Gateway queries each authorized MCP server to discover the set of available tools or functions. Discovery runs in real time for every user request, and the results are cached for the duration of that request so that no server is queried twice.
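Request-scoped caching can be as simple as a dictionary that lives for one request. In this sketch the `RequestScopedDiscovery` class and the `list_tools(server, token)` callable are hypothetical; the callable stands in for whatever call actually fetches a server's tool list.

```python
from __future__ import annotations


class RequestScopedDiscovery:
    """Caches each server's tool list for the lifetime of one request."""

    def __init__(self, list_tools):
        self._list_tools = list_tools  # Callable: (server, token) -> list of tool dicts.
        self._cache: dict[str, list] = {}

    def tools_for(self, server: str, token: str) -> list:
        # Only the first lookup per server reaches the MCP server.
        if server not in self._cache:
            self._cache[server] = self._list_tools(server, token)
        return self._cache[server]


fetches = []


def fake_list_tools(server, token):
    fetches.append(server)
    return [{"name": f"{server}_tool"}]


discovery = RequestScopedDiscovery(fake_list_tools)  # Create one per request.
discovery.tools_for("github", "token-a")
discovery.tools_for("github", "token-a")
print(fetches)  # The second call was served from the cache.
```

Creating a new instance per request means permissions or tool lists that change between requests are picked up immediately.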

4. Unified LLM Invocation

The Gateway constructs a single, comprehensive prompt for the designated LLM provider (e.g., OpenAI, AWS Bedrock). This prompt includes the original user query along with the metadata and schemas of all the discovered tools, enabling the LLM to generate a complete, multi-step execution plan.
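One way to picture this step is as a function that folds the discovered tools into a single chat request. The sketch below builds an OpenAI-style payload with a `tools` array; prefixing each tool name with its server, the `build_llm_request` helper and the `example-model` name are assumptions for illustration, and other providers expect a different request shape.

```python
from __future__ import annotations


def to_llm_tool(server: str, mcp_tool: dict) -> dict:
    """Convert one MCP tool definition into an OpenAI-style function tool."""
    return {
        "type": "function",
        "function": {
            # Prefixing with the server name is an assumption to avoid name clashes.
            "name": f"{server}__{mcp_tool['name']}",
            "description": mcp_tool.get("description", ""),
            "parameters": mcp_tool.get("inputSchema", {"type": "object", "properties": {}}),
        },
    }


def build_llm_request(model: str, user_prompt: str, discovered: dict[str, list]) -> dict:
    """Combine the user's prompt with every discovered tool schema."""
    tools = [
        to_llm_tool(server, tool)
        for server, server_tools in discovered.items()
        for tool in server_tools
    ]
    return {
        "model": model,
        "messages": [{"role": "user", "content": user_prompt}],
        "tools": tools,
    }


payload = build_llm_request(
    "example-model",
    "Open a PR that fixes the failing health check.",
    {"github": [{"name": "create_pull_request", "description": "Open a pull request.",
                 "inputSchema": {"type": "object", "properties": {}}}]},
)
print([tool["function"]["name"] for tool in payload["tools"]])
```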

5. Iterative Tool Execution

The Gateway then executes the plan generated by the LLM in a sequential, iterative loop:

- The Gateway invokes the selected tool on the appropriate MCP server, passing the necessary user-specific access token with the call.

- The result from the tool execution is returned to the Gateway.

- This output is then fed back into the context of the LLM for the next step of the plan.

- This cycle repeats until the LLM determines that the task is complete.
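The loop above can be sketched with a scripted stand-in for the model. The `run_agent` function, the decision dictionaries, the `list_pods` tool name and the `max_steps` limit are all hypothetical; the step limit in particular is an added safety assumption so that a confused model cannot loop forever.

```python
def run_agent(llm, call_tool, user_prompt, max_steps=10):
    """Alternate between the LLM and tool calls until the LLM returns a final answer."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        decision = llm(messages)
        if decision["type"] == "final":
            return decision["content"]
        # Invoke the selected tool and feed its result back into the context.
        result = call_tool(decision["tool"], decision["arguments"])
        messages.append({"role": "tool", "name": decision["tool"], "content": str(result)})
    raise RuntimeError("step limit reached before the task completed")


def scripted_llm(messages):
    """Fake LLM: asks for one tool call, then answers using the tool's result."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "tool": "list_pods", "arguments": {"namespace": "prod"}}
    return {"type": "final", "content": f"Found: {tool_results[-1]['content']}"}


def fake_call_tool(name, arguments):
    # Stand-in for an MCP tool invocation carrying the user's access token.
    return ["api-7f9c"]


print(run_agent(scripted_llm, fake_call_tool, "Why is the API failing?"))
```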

6. Encapsulated External API Interaction

All external API calls (e.g., to GitHub, Stripe) are handled exclusively by the MCP servers, not the Gateway.

7. Response Delivery and Asynchronous Logging

Once the execution loop is complete, the final response is transmitted back to the user. Concurrently, all relevant operational data—including traces, performance timings, and cost metrics—is sent to an asynchronous queue for ingestion by observability and analytics platforms. This ensures that logging does not introduce any latency into the user-facing response time.
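The pattern of answering first and logging in the background can be sketched with an `asyncio.Queue`. This is a single-process illustration with hypothetical names (`log_worker`, `handle_request`, an in-memory `sink` list); the source does not specify which queue or analytics backend the Gateway uses.

```python
import asyncio


async def log_worker(queue: asyncio.Queue, sink: list) -> None:
    """Drain log records in the background, off the request path."""
    while True:
        record = await queue.get()
        sink.append(record)  # Stand-in for shipping to an analytics platform.
        queue.task_done()


async def handle_request(prompt: str, queue: asyncio.Queue) -> str:
    response = f"echo: {prompt}"  # Stand-in for the real agent loop.
    # put_nowait returns immediately, so logging never delays the response.
    queue.put_nowait({"prompt": prompt, "chars": len(response)})
    return response


async def main():
    queue: asyncio.Queue = asyncio.Queue()
    sink: list = []
    worker = asyncio.create_task(log_worker(queue, sink))
    response = await handle_request("hello", queue)
    await queue.join()  # Only so the demo can show the flushed record.
    worker.cancel()
    await asyncio.gather(worker, return_exceptions=True)
    return response, sink


print(asyncio.run(main()))
```

In a long-running service the worker would simply keep running; the `join` and `cancel` calls exist only so the example terminates cleanly.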

Best Practices for Secure AI Gateway with MCP

A secure AI gateway acts as the protective layer between AI systems and enterprise data, applications, and users. Implementing the right practices ensures safe data flow, controlled access, and reliable AI operations while minimizing security and compliance risks.

- Enforce strong authentication and authorization: Use multi-factor authentication (MFA) and role-based access control (RBAC) to ensure only verified users and services can access the AI gateway.

- Apply data minimization principles: Allow AI systems to access only the data necessary for specific tasks to reduce exposure of sensitive information.

- Encrypt data in transit and at rest: Use modern encryption standards to protect data flowing through the gateway and stored within logs or caches.

- Implement real-time monitoring and alerts: Continuously monitor traffic, detect anomalies, and trigger alerts for suspicious activities or unusual data requests.

- Maintain detailed audit logs: Record all requests, responses, and data access events to support compliance, investigations, and accountability.

- Use content filtering and redaction: Automatically detect and mask sensitive data such as personal information, financial details, or credentials before it reaches AI models.

- Regularly update and patch systems: Keep gateway software, dependencies, and security controls up to date to prevent vulnerabilities.

- Set rate limits and usage controls: Prevent abuse, denial-of-service attempts, or excessive API usage by enforcing limits and quotas.

- Isolate environments and workloads: Separate development, testing, and production environments to reduce the risk of cross-environment data leaks.

- Conduct periodic security audits: Regularly review configurations, policies, and access controls to ensure ongoing compliance and effectiveness.
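Of the practices above, rate limiting is the easiest to show in code. The sketch below uses a token bucket, a common technique that we are assuming here for illustration; the source does not say which algorithm the Gateway uses, and the capacity and refill values are arbitrary examples.

```python
class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `refill_per_second`."""

    def __init__(self, capacity: int, refill_per_second: float, now: float = 0.0):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)  # Start full so an initial burst is allowed.
        self.updated = now

    def allow(self, now: float) -> bool:
        """Return True and consume one token if the call is within the limit."""
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# One bucket per (user, tool) pair would give per-tool quotas.
bucket = TokenBucket(capacity=2, refill_per_second=1.0)
print([bucket.allow(now=0.0) for _ in range(3)])  # Third call exceeds the burst.
print(bucket.allow(now=1.0))  # One second later a token has been refilled.
```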

Getting Started & Beta Launch of MCP Gateway

The beta launch of MCP Gateway is live on the TrueFoundry Control Plane. Sign up for a three‑month trial, or book a personalised advisory session to benchmark cost, latency and architecture with our team.

Ready to turn siloed APIs into super‑powered agents? Create your free account and plug your first MCP server in minutes.

Conclusion

As enterprises scale agentic AI, a secure AI gateway with MCP becomes essential for connecting models to real business tools safely and reliably. MCP enables standardized tool access, while the gateway enforces governance, security, and observability across every interaction.

With centralized control, fine-grained permissions, and real-time monitoring, organizations can deploy powerful AI agents without compromising data protection or compliance. Adopting a secure AI gateway with MCP ensures scalable, trustworthy, and production-ready AI automation for the enterprise.

Book a demo to see how TrueFoundry’s AI gateway with MCP will work in your enterprise environment.

Frequently Asked Questions

What is an enterprise AI gateway with MCP?

An enterprise AI gateway with MCP is a centralized layer that connects AI models to enterprise tools and data using the Model Context Protocol. It manages authentication, access control, logging, and policy enforcement, ensuring secure, compliant, and observable AI interactions while enabling agents to safely discover and invoke approved business APIs.

How does MCP enhance an enterprise AI gateway?

MCP enhances an enterprise AI gateway by standardizing how AI models discover and use tools. It provides structured schemas, authentication details, and usage rules, enabling gateways to enforce policies, manage permissions, and monitor tool usage. This ensures consistent, secure, and scalable integration between AI agents and enterprise systems across environments.

What security features should an enterprise AI gateway with MCP include?

An enterprise AI gateway with MCP should include strong authentication, role-based access control, encryption, audit logging, real-time monitoring, data redaction, and rate limiting. It should also support policy enforcement, secure token management, and compliance controls to prevent unauthorized access, protect sensitive data, and ensure safe AI interactions with enterprise tools.

How do enterprises secure MCP tool access through AI gateways?

Enterprises secure MCP tool access by enforcing identity-based authentication, assigning role-based permissions, and issuing short-lived tokens for tool calls. AI gateways validate user context, restrict access to approved MCP servers, log all interactions, and apply guardrails such as approval workflows and data filtering to prevent misuse or unauthorized data exposure.

What are best practices for scaling an enterprise AI gateway with MCP?

Best practices include centralizing MCP server registries, implementing granular access controls, enabling observability and cost tracking, and automating policy enforcement. Organizations should isolate environments, use rate limits, maintain audit trails, and regularly review configurations. These measures ensure secure, efficient, and compliant scaling as AI adoption expands across teams and workflows.

