Databricks and TrueFoundry Partnership


TrueFoundry AI Gateway registers Databricks Model Serving as a first-class provider and routes inference traffic to it through the same OpenAI-compatible endpoint that handles every other provider in your stack. The integration extends beyond model routing: TrueFoundry's MCP Gateway sits in front of Databricks managed MCP servers to enforce authentication, tool-level access control, and audit logging for agent workflows that query Unity Catalog.

This post walks through four integration points:

- The AI Gateway provider registration and routing layer for Databricks Model Serving endpoints.

- The MCP Gateway governance layer for Databricks managed MCP servers.

- The Virtual Model routing plane that enables multi-provider failover between Databricks-hosted models and commercial APIs.

- The workflow orchestration layer that triggers Databricks jobs as native tasks inside TrueFoundry pipelines.

Each section includes working configuration and enough architectural detail to evaluate whether this integration fits your stack.

How the AI Gateway Works Internally

TrueFoundry AI Gateway uses a split architecture. A control plane manages configuration, including models, users, routing rules, and rate limits. A gateway plane processes the actual inference requests. The control plane stores its state in PostgreSQL and ClickHouse and syncs all configuration to the gateway pods via a NATS message queue. Updates propagate in real time without restarts.

The gateway plane is built on the Hono framework and performs all authentication, authorization, and rate-limiting checks in memory. A single gateway pod with 1 vCPU and 1 GB of RAM handles 250+ requests per second with approximately 3 ms of added latency. The pod scales to 350+ RPS before CPU saturation. Adding pods scales throughput horizontally to tens of thousands of requests per second.
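As a rough sizing aid, the per-pod figure above can be turned into a pod count. This is a back-of-the-envelope sketch, not a TrueFoundry tool: the function name `pods_needed` and the 30% headroom default are assumptions chosen for illustration, and only the 250 RPS per-pod figure comes from the text.

```python
import math


def pods_needed(target_rps: float, per_pod_rps: float = 250.0, headroom: float = 0.3) -> int:
    """Estimate how many 1 vCPU gateway pods a target throughput needs.

    per_pod_rps defaults to the 250 RPS figure quoted above.
    headroom is an assumed safety margin (fraction of capacity kept free).
    """
    if target_rps <= 0:
        return 0
    usable_rps = per_pod_rps * (1.0 - headroom)
    return math.ceil(target_rps / usable_rps)


if __name__ == "__main__":
    for rps in (500, 5_000, 20_000):
        print(rps, "RPS ->", pods_needed(rps), "pods")
```

Real capacity depends on payload size, streaming duration, and guardrail configuration, so treat the result as a starting point for load testing.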

When a request hits the gateway, processing follows a strict sequence with no external calls in the hot path other than the provider call itself:

- The gateway validates the JWT token against cached public keys from your IdP. The keys are downloaded once and cached. No external auth call is required.

- It checks the in-memory authorization map to verify the user has access to the requested model. The entire user-to-model association map is synced via NATS and held in memory.

- It resolves the model identifier. If the identifier is a Virtual Model, the gateway applies routing rules (priority-based, weight-based, or latency-based) entirely in memory.

- An adapter translates the request from OpenAI-compatible format into the target provider's format.

- The request is forwarded to the provider and the response is streamed back to the client.

The only external call in this path is the actual LLM provider call. Logs and metrics are written to NATS asynchronously after the response completes, so a request does not fail even if the NATS queue is temporarily unreachable.
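The in-memory part of that sequence (authorization lookup, model resolution, adapter hand-off) can be sketched as below. This is an illustration under stated assumptions, not the gateway's actual code: `GatewayState`, `Forbidden`, `handle`, and `to_provider_request` are hypothetical names, JWT validation is omitted, and a Virtual Model is resolved by simply taking its first target.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


class Forbidden(Exception):
    """Raised when the caller is not allowed to use the requested model."""


@dataclass
class GatewayState:
    """Hypothetical in-memory snapshot of the configuration synced via NATS."""
    user_models: Dict[str, Set[str]] = field(default_factory=dict)
    virtual_models: Dict[str, List[str]] = field(default_factory=dict)


def to_provider_request(target: str, openai_body: dict) -> dict:
    """Stand-in for the adapter step: split the target and pass the body on."""
    provider_account, _, model_id = target.partition("/")
    return {"provider_account": provider_account, "model_id": model_id, "body": dict(openai_body)}


def handle(state: GatewayState, user: str, openai_body: dict) -> dict:
    model = openai_body["model"]
    # Authorization is a dictionary lookup, not a network call.
    if model not in state.user_models.get(user, set()):
        raise Forbidden(f"{user} may not call {model}")
    # A Virtual Model expands to physical targets; a plain model maps to itself.
    targets = state.virtual_models.get(model, [model])
    return to_provider_request(targets[0], openai_body)


if __name__ == "__main__":
    state = GatewayState(
        user_models={"alice": {"production-assistant"}},
        virtual_models={"production-assistant": ["databricks-main/custom-finetuned-llama"]},
    )
    print(handle(state, "alice", {"model": "production-assistant", "messages": []}))
```

Every step before the provider call reads local state only, which is what keeps the added latency small.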

Databricks as a First Class Model Provider

You register Databricks Model Serving as a provider in the AI Gateway by providing authentication credentials and your workspace URL. TrueFoundry supports two authentication methods.

Service Principal authentication is the recommended approach for production. You provide the Client ID and OAuth Secret generated in your Databricks workspace settings under Service Principals. This uses the OAuth 2.0 Client Credentials flow.

Personal Access Token authentication works for development and testing. You provide a Databricks PAT directly.

In both cases you also provide the workspace base URL (for example https://<workspace_id>.cloud.databricks.com).

Once the provider is registered, you add individual models. The Model ID in TrueFoundry must exactly match the serving endpoint name in your Databricks workspace. Any model served by Databricks becomes routable through the Gateway, including Foundation Models available through Databricks, custom fine-tuned models from Mosaic AI, and third-party models accessible through Databricks Model Serving.
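For context on what Service Principal authentication involves, here is a sketch of the OAuth 2.0 Client Credentials request against a Databricks workspace. The Gateway performs this exchange for you; the sketch only builds the request and does not send it. The function name is hypothetical, and the `/oidc/v1/token` path and `all-apis` scope reflect the Databricks machine-to-machine OAuth flow as commonly documented, so verify them against your workspace before relying on them.

```python
import base64
import urllib.parse
import urllib.request


def build_token_request(workspace_url: str, client_id: str, client_secret: str) -> urllib.request.Request:
    """Build (but do not send) a client-credentials token request."""
    token_url = workspace_url.rstrip("/") + "/oidc/v1/token"  # assumed workspace token endpoint
    form = urllib.parse.urlencode(
        {"grant_type": "client_credentials", "scope": "all-apis"}
    ).encode()
    # The client ID and secret travel as HTTP Basic credentials.
    basic = base64.b64encode(f"{client_id}:{client_secret}".encode()).decode()
    return urllib.request.Request(
        token_url,
        data=form,
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_token_request("https://example.cloud.databricks.com", "my-client-id", "my-secret")
    print(req.get_method(), req.full_url)
```

The response to such a request is a short-lived access token, which is why this method is preferred over a long-lived PAT in production.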

Using the Gateway in Your Application

Application code hits one URL regardless of the underlying provider. The Gateway translates between OpenAI-compatible format and whatever the downstream provider expects.

```python
from openai import OpenAI

client = OpenAI(
    base_url="https://your-truefoundry-gateway.com/api/llm",
    api_key="your-truefoundry-api-key",
)

# This request is routed through the Gateway to Databricks Model Serving.
# The application code does not know or care which provider serves it.
response = client.chat.completions.create(
    model="databricks-main/custom-finetuned-llama",
    messages=[{"role": "user", "content": "Analyze Q3 churn trends"}],
)
```

The model field uses the format <provider-account-name>/<model-id>, where the model ID matches the Databricks serving endpoint name you configured.

Virtual Models for Multi Provider Routing

A Virtual Model is a logical model identifier that maps to multiple physical providers with routing rules. Your application calls a single model name, and the Gateway handles target selection and failover automatically.

```python
# This hits a Virtual Model. The Gateway resolves it to the best
# physical provider based on your routing configuration.
response = client.chat.completions.create(
    model="production-assistant",
    messages=[{"role": "user", "content": "Summarize this contract"}],
)
```

TrueFoundry supports three routing strategies for Virtual Models.

Weight-based routing distributes traffic across targets by assigned percentages. For example, you can send 80% of traffic to your Databricks-hosted fine-tuned model and 20% to Claude for comparison testing. Weight-based routing also supports sticky routing, which pins a session to a target using request headers or metadata so that multi-turn conversations stay consistent.
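A minimal sketch of weighted selection with an optional sticky key follows. It is an illustration, not the gateway's algorithm: `pick_weighted` is a hypothetical name, weights are assumed to be positive integers, and hashing the session key with SHA-256 is one simple way to make the choice stable per session.

```python
import hashlib
import random
from typing import Dict, Optional


def pick_weighted(weights: Dict[str, int], sticky_key: Optional[str] = None, rng=None) -> str:
    """Pick a target in proportion to its weight.

    With sticky_key set, the same key always lands on the same target
    (as long as the weights are unchanged).
    """
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    if sticky_key is not None:
        # Map the session key to a stable point in [0, total).
        digest = hashlib.sha256(sticky_key.encode()).digest()
        point = int.from_bytes(digest[:8], "big") % total
    else:
        point = (rng or random).randrange(total)
    cumulative = 0
    for name, weight in weights.items():
        cumulative += weight
        if point < cumulative:
            return name
    raise AssertionError("unreachable: point is always below the total weight")


if __name__ == "__main__":
    split = {"databricks-main/custom-finetuned-llama": 80, "anthropic-main/claude": 20}
    print(pick_weighted(split))
    print(pick_weighted(split, sticky_key="conversation-123"))
```

Hash-based stickiness keeps the long-run split close to the configured weights while guaranteeing that one conversation never alternates between models.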

Priority-based routing sends all traffic to the highest-priority healthy target (priority 0 is highest). If that target fails or is unavailable, the gateway falls back to the next priority. This strategy supports an optional SLA cutoff that monitors average time per output token over a 3-minute rolling window and marks a target unhealthy when it breaches a configured threshold.
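The selection rule can be sketched as follows. The `Target` dataclass and `pick_by_priority` are hypothetical names, the rolling average is assumed to be computed elsewhere, and the behavior when no target is healthy (still trying the highest priority) is an assumption rather than documented gateway behavior.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Target:
    name: str
    priority: int                        # 0 is the highest priority
    avg_tpot_ms: Optional[float] = None  # assumed 3-minute rolling average, if known
    failing: bool = False                # set by error-based health checks


def pick_by_priority(targets: List[Target], sla_cutoff_ms: Optional[float] = None) -> str:
    """Return the highest-priority target that is healthy and within the SLA cutoff."""

    def healthy(t: Target) -> bool:
        if t.failing:
            return False
        if sla_cutoff_ms is not None and t.avg_tpot_ms is not None:
            return t.avg_tpot_ms <= sla_cutoff_ms
        return True

    ordered = sorted(targets, key=lambda t: t.priority)
    for target in ordered:
        if healthy(target):
            return target.name
    # Assumption: with nothing healthy, still attempt the highest priority.
    return ordered[0].name


if __name__ == "__main__":
    pool = [
        Target("databricks-main/custom-finetuned-llama-3", priority=0, avg_tpot_ms=95.0),
        Target("anthropic-main/claude-sonnet-4-5", priority=1, avg_tpot_ms=30.0),
    ]
    print(pick_by_priority(pool, sla_cutoff_ms=50.0))
```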

Latency-based routing automatically routes to the target with the lowest recent latency. The gateway uses time per output token (inter-token latency) as the metric and considers requests from the last 20 minutes, up to a maximum of 100 samples. If a target has fewer than 3 requests in that window, the gateway treats it as the fastest so that it receives traffic and accumulates samples. To avoid rapid switching, targets whose latency is within 1.2x of the fastest are considered equally fast.
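Those rules translate into a small tracker. This is a sketch of the stated parameters, not the gateway's implementation: `LatencyTracker` and its methods are hypothetical, and how the gateway chooses among equally fast candidates is not covered here.

```python
import time
from collections import deque
from typing import Deque, Dict, List, Optional, Tuple

WINDOW_SECONDS = 20 * 60  # only the last 20 minutes count
MAX_SAMPLES = 100         # at most 100 samples per target
MIN_SAMPLES = 3           # fewer than this: treat the target as fastest
TOLERANCE = 1.2           # within 1.2x of the fastest counts as equally fast


class LatencyTracker:
    def __init__(self) -> None:
        self._samples: Dict[str, Deque[Tuple[float, float]]] = {}

    def record(self, target: str, tpot_ms: float, now: Optional[float] = None) -> None:
        """Store one time-per-output-token observation for a target."""
        now = time.time() if now is None else now
        self._samples.setdefault(target, deque(maxlen=MAX_SAMPLES)).append((now, tpot_ms))

    def candidates(self, targets: List[str], now: Optional[float] = None) -> List[str]:
        """Return the targets currently considered equally fastest."""
        now = time.time() if now is None else now
        averages: Dict[str, float] = {}
        for target in targets:
            recent = [v for ts, v in self._samples.get(target, ()) if now - ts <= WINDOW_SECONDS]
            # Under-sampled targets get latency 0 so they win and gather data.
            averages[target] = sum(recent) / len(recent) if len(recent) >= MIN_SAMPLES else 0.0
        fastest = min(averages.values())
        return [t for t in targets if averages[t] <= fastest * TOLERANCE]


if __name__ == "__main__":
    tracker = LatencyTracker()
    for value in (40.0, 42.0, 41.0):
        tracker.record("databricks-main/llama", value)
    for value in (45.0, 44.0, 46.0):
        tracker.record("anthropic-main/claude", value)
    print(tracker.candidates(["databricks-main/llama", "anthropic-main/claude"]))
```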

Failover and Health Detection

The gateway continuously monitors every target and marks a target unhealthy when its failures cross a threshold. The tracked error responses are 5xx, 429, 401, and 403 status codes. The default threshold is 2 or more failures within a 2-minute rolling evaluation window. An unhealthy target is moved to the end of the routing list, so healthy targets are always tried first. Recovery is automatic once the errors age out of the evaluation window.
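The default health rule is easy to express as a rolling window of failure timestamps. The sketch below uses hypothetical names (`HealthTracker`, `counts_as_failure`) and takes the clock as an explicit argument so the behavior is easy to reason about; it mirrors the defaults quoted above and nothing more.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, List

FAILURE_THRESHOLD = 2   # failures needed to mark a target unhealthy
WINDOW_SECONDS = 120.0  # 2-minute rolling evaluation window


def counts_as_failure(status: int) -> bool:
    """5xx, 429, 401 and 403 responses count toward the failure threshold."""
    return 500 <= status <= 599 or status in (401, 403, 429)


class HealthTracker:
    def __init__(self) -> None:
        self._failures: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, target: str, status: int, now: float) -> None:
        if counts_as_failure(status):
            self._failures[target].append(now)

    def is_healthy(self, target: str, now: float) -> bool:
        failures = self._failures[target]
        # Drop failures that have aged out of the window (automatic recovery).
        while failures and now - failures[0] > WINDOW_SECONDS:
            failures.popleft()
        return len(failures) < FAILURE_THRESHOLD

    def order(self, targets: List[str], now: float) -> List[str]:
        """Keep the configured order, but move unhealthy targets to the end."""
        healthy = [t for t in targets if self.is_healthy(t, now)]
        unhealthy = [t for t in targets if t not in healthy]
        return healthy + unhealthy


if __name__ == "__main__":
    tracker = HealthTracker()
    tracker.record("databricks-main/llama", 503, now=0.0)
    tracker.record("databricks-main/llama", 429, now=10.0)
    print(tracker.order(["databricks-main/llama", "anthropic-main/claude"], now=20.0))
```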

Each target also supports its own retry configuration. The defaults are 2 retry attempts with a 100 ms delay between retries. A retry is triggered on 429, 500, 502, and 503 status codes. Fallback to a different target is triggered on 401, 403, 404, 429, 500, 502, and 503.
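How retries and fallback interact can be sketched with the defaults above. This is an illustration under assumptions: `call_with_failover` and the `send` callback are hypothetical, "2 retry attempts" is read as two retries after the initial call, and a non-fallback error (for example a 400) is surfaced to the caller immediately.

```python
import time
from typing import Callable, List, Tuple

RETRY_STATUSES = {429, 500, 502, 503}
FALLBACK_STATUSES = {401, 403, 404, 429, 500, 502, 503}


def call_with_failover(
    targets: List[str],
    send: Callable[[str], Tuple[int, str]],
    retries: int = 2,
    delay_s: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[str, str]:
    """Try targets in order; retry transient errors, then fall back.

    send(target) is a caller-supplied function returning (status, body).
    """
    for target in targets:
        attempts = 0
        while True:
            status, body = send(target)
            if status < 400:
                return target, body
            if status in RETRY_STATUSES and attempts < retries:
                attempts += 1
                sleep(delay_s)
                continue
            break
        if status not in FALLBACK_STATUSES:
            # Not a fallback status: moving to another target would not help.
            raise RuntimeError(f"{target} returned {status}")
    raise RuntimeError("all targets failed")


if __name__ == "__main__":
    def fake_send(target: str) -> Tuple[int, str]:
        return (503, "") if target.startswith("databricks") else (200, "ok")

    print(call_with_failover(["databricks-main/llama", "anthropic-main/claude"], fake_send, sleep=lambda s: None))
```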

Observability Across Providers

Every request through the Gateway is traced with full attribution: user, model, provider, request latency, token count, and estimated cost. The gateway is OpenTelemetry compliant and exports traces asynchronously via NATS to a configurable OTEL endpoint (gRPC or HTTP), so you can route them to whatever observability stack you run. This gives you a single dashboard for all providers rather than a patchwork of provider-specific metrics.

MCP Gateway for Agent Workflows

Databricks launched managed MCP server support in mid-2025. These servers let agents securely access Unity Catalog resources through the Model Context Protocol. Databricks provides managed servers for Genie (structured data access via natural language), Vector Search (unstructured data from vector indexes), and UC Functions (custom functions registered in Unity Catalog). Unity Catalog permissions are enforced automatically, so agents can only access the tools and data their identity is authorized for.

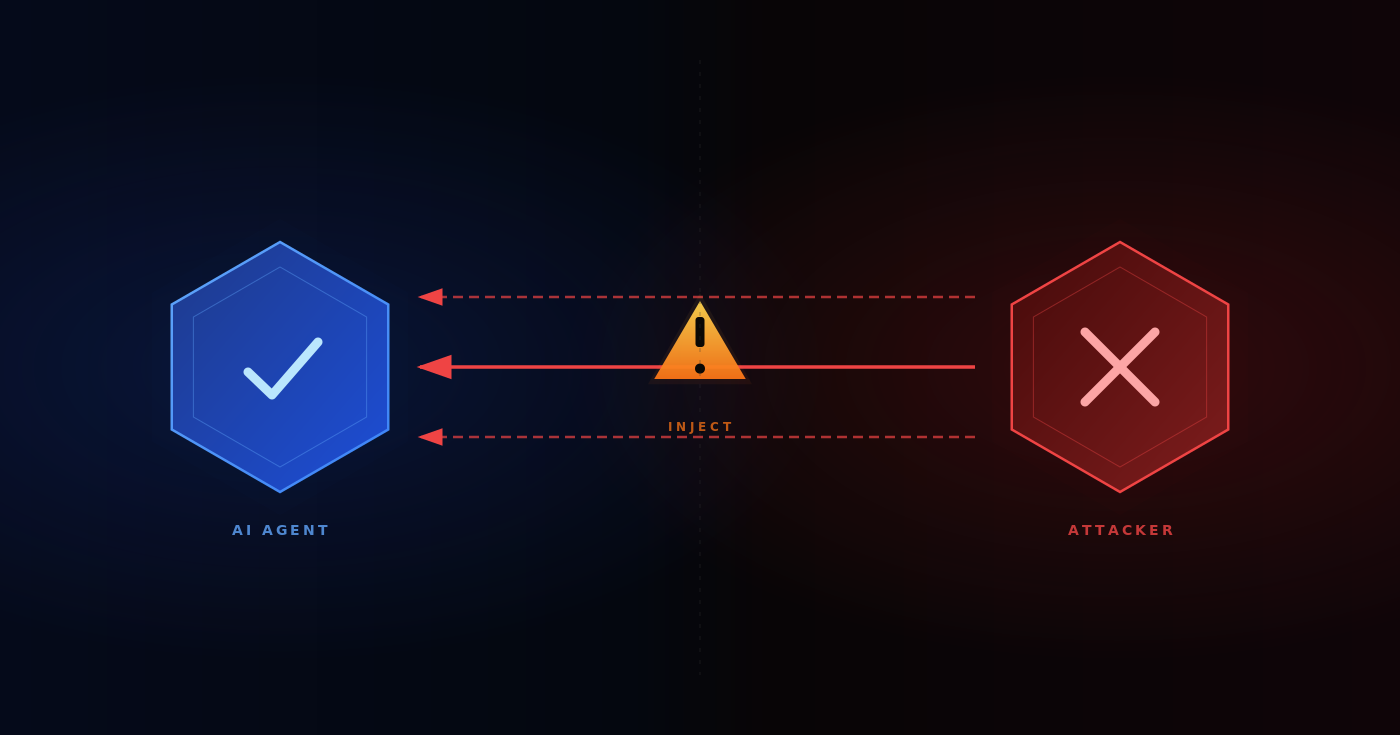

The governance challenge is what happens between the agent and those MCP servers. Without a control plane, each developer configures their own connections, manages their own credentials, and creates their own tool policies. There is no centralized audit trail of which agent called which tool, no way to enforce least-privilege access across teams, and no central place to revoke access when someone leaves.

How the MCP Gateway Solves This

TrueFoundry MCP Gateway sits between your agents (Claude Code, Cursor, and custom agent frameworks) and your MCP servers (including Databricks managed MCP servers). It acts as a reverse proxy that adds authentication, authorization, and audit logging.

Authentication flow. Agents authenticate once to the MCP Gateway using a TrueFoundry API key or an external IdP token (Okta, Azure AD, and Auth0 are supported). The Gateway handles outbound authentication to each downstream MCP server. For Databricks, this means the Gateway holds the Service Principal OAuth credentials or PAT, and individual agents never touch raw Databricks credentials.

Tool-level access control. The Gateway lets you selectively enable or disable individual tools per team. You can also aggregate tools from multiple MCP servers into a Virtual MCP Server that exposes only a curated subset. For example, your data science team might get access to Genie and Vector Search from Databricks plus a web search server, while your engineering team gets a different tool set.

Guardrails. The Gateway supports guardrails at four hooks; the two that apply to MCP tool calls are MCP Pre Tool and MCP Post Tool. MCP Pre Tool guardrails run before the tool is called and can validate SQL queries, check for sensitive data, and enforce permission policies. If any pre-tool guardrail fails, the tool never executes. MCP Post Tool guardrails inspect and optionally rewrite tool outputs before results return to the model; you can configure them to scan results for PII and secrets. User approval workflows can be configured for high-risk operations. Three enforcement strategies are available: Enforce (block on violation or guardrail error), Enforce But Ignore On Error (block on violation but allow on guardrail service error), and Audit (log only, never block).

Audit trail. Every tool invocation is traced with the calling user, the MCP server, the specific tool, the request and response payloads, and latency. These traces export via OpenTelemetry alongside your LLM request traces, giving you one unified log of everything your agents do.
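The three enforcement strategies reduce to a small decision table. The sketch below encodes it directly; the enum and function names are hypothetical and chosen only for illustration.

```python
from enum import Enum


class Strategy(Enum):
    ENFORCE = "enforce"
    ENFORCE_BUT_IGNORE_ON_ERROR = "enforce_but_ignore_on_error"
    AUDIT = "audit"


class Outcome(Enum):
    PASSED = "passed"        # guardrail ran and found nothing
    VIOLATION = "violation"  # guardrail ran and flagged the call
    ERROR = "error"          # guardrail service itself failed


def should_block(strategy: Strategy, outcome: Outcome) -> bool:
    """Decide whether a tool call is blocked for a given guardrail outcome."""
    if strategy is Strategy.AUDIT:
        return False  # log only, never block
    if outcome is Outcome.VIOLATION:
        return True   # both enforcing strategies block violations
    if outcome is Outcome.ERROR:
        # Only strict Enforce fails closed when the guardrail service errors.
        return strategy is Strategy.ENFORCE
    return False


if __name__ == "__main__":
    for strategy in Strategy:
        print(strategy.name, {o.name: should_block(strategy, o) for o in Outcome})
```

Enforce fails closed and Enforce But Ignore On Error fails open, which is the main trade-off to weigh when a guardrail depends on an external service.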

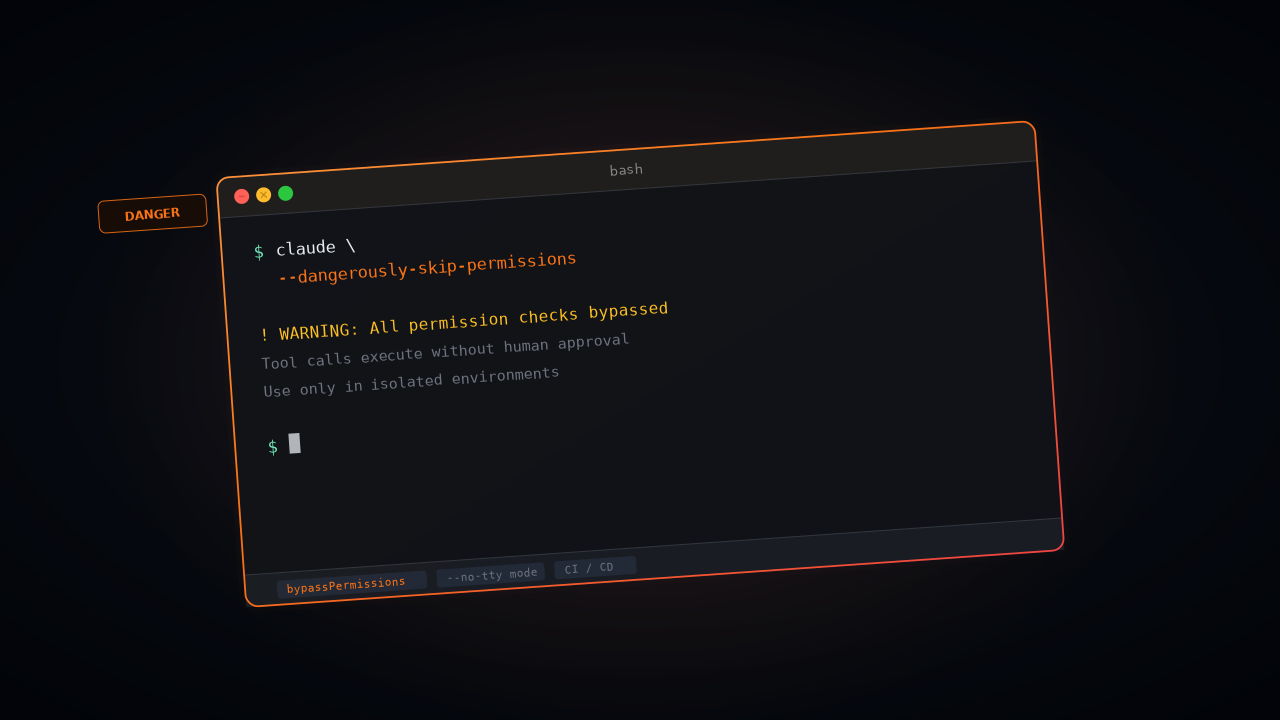

Configuring Claude Code for Enterprise MCP Access

If your developers use Claude Code, you configure the MCP Gateway as a remote MCP server. Enterprise governance requires two separate configuration files, deployed via MDM on corporate devices.

The managed-settings.json file controls which MCP servers Claude Code is allowed to connect to:

```json
{
  "allowedMcpServers": [
    { "serverUrl": "https://mcp-gateway.your-company.com/*" }
  ],
  "strictKnownMarketplaces": []
}
```

Setting strictKnownMarketplaces to an empty array blocks all marketplace-sourced MCP installations. Combined with allowedMcpServers, this creates a locked-down configuration where agents can only access tools through your governed Gateway.

The managed-mcp.json file defines the actual MCP server connections. Deploy this via MDM to the system level path (/Library/Application Support/ClaudeCode/managed-mcp.json on macOS or /etc/claude-code/managed-mcp.json on Linux):

```json
{
  "databricks-unity-catalog": {
    "type": "http",
    "url": "https://mcp-gateway.your-company.com/mcp/v1/databricks-uc/mcp"
  },
  "databricks-sql": {
    "type": "http",
    "url": "https://mcp-gateway.your-company.com/mcp/v1/databricks-sql/mcp"
  }
}
```

When managed-mcp.json is deployed via MDM, it takes exclusive control. Developers cannot add or use MCP servers beyond what is defined in this file. Access control decisions happen at the Gateway, so you only need to update the MDM-deployed file when adding or removing entire server integrations.
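Because the two files are deployed separately, it can be worth checking before rollout that every server in managed-mcp.json is covered by the allow-list in managed-settings.json. The sketch below is a hypothetical pre-deployment check, not part of Claude Code or TrueFoundry; it approximates the wildcard with shell-style matching, which may differ from how Claude Code evaluates serverUrl patterns, and it accepts server entries either at the top level or under an "mcpServers" key.

```python
from fnmatch import fnmatchcase
from typing import List


def disallowed_servers(managed_settings: dict, managed_mcp: dict) -> List[str]:
    """Return names of MCP servers whose URL matches no allow-list pattern."""
    patterns = [
        entry["serverUrl"]
        for entry in managed_settings.get("allowedMcpServers", [])
        if "serverUrl" in entry
    ]
    # Accept entries at the top level or nested under "mcpServers".
    servers = managed_mcp.get("mcpServers", managed_mcp)
    return [
        name
        for name, server in servers.items()
        if not any(fnmatchcase(server.get("url", ""), pattern) for pattern in patterns)
    ]


if __name__ == "__main__":
    settings = {"allowedMcpServers": [{"serverUrl": "https://mcp-gateway.your-company.com/*"}]}
    mcp = {
        "databricks-unity-catalog": {
            "type": "http",
            "url": "https://mcp-gateway.your-company.com/mcp/v1/databricks-uc/mcp",
        },
        "rogue": {"type": "http", "url": "https://example.org/mcp"},
    }
    print(disallowed_servers(settings, mcp))
```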

For a comprehensive guide to securing Claude Code in enterprise environments, including MDM deployment scripts, sandbox enforcement, and the full managed settings schema, see the Enterprise Security for Claude documentation.

Mosaic AI Models as Routed Providers

This integration point is specifically for teams that fine-tune models on Databricks using Mosaic AI Training and want to serve them alongside commercial API models through a single endpoint.

The setup is as follows. You fine-tune the model and deploy it through Databricks Model Serving so that it is available as a serving endpoint. You register Databricks as a provider in the AI Gateway as described above. You then create a Virtual Model with priority-based routing that tries your Databricks-hosted model first and falls back to a commercial API:

```yaml
routing_config:
  type: priority-based-routing
  load_balance_targets:
    - target: databricks-main/custom-finetuned-llama-3
      priority: 0
    - target: anthropic-main/claude-sonnet-4-5
      priority: 1
```

All traffic goes to your Databricks-hosted custom model by default. If Databricks returns a server error, times out, or hits a rate limit, the Gateway automatically falls back to Claude Sonnet. The status codes that trigger fallback are 401, 403, 404, 429, 500, 502, and 503. The application code never knows the failover happened; it sees a successful response from the Virtual Model identifier.

This pattern is also useful during model evaluation. You can run both providers in parallel using weight-based routing with a 50/50 split, log the responses, and compare quality before committing to the fine-tuned model for all traffic. The Gateway's per-request traces include the resolved provider that served each response (also returned in the x-tfy-resolved-model response header), so you can filter and analyze by provider in your observability stack.
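Once each logged response carries the resolved model, a per-provider comparison is a simple group-by. The sketch below assumes you have already collected records into dictionaries; the field names `resolved_model`, `latency_ms`, and `total_tokens` are hypothetical and depend on how your observability stack stores the trace attributes.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List


def summarize_by_model(records: Iterable[dict]) -> Dict[str, dict]:
    """Group trace records by resolved model and compute simple aggregates."""
    grouped: Dict[str, List[dict]] = defaultdict(list)
    for record in records:
        grouped[record["resolved_model"]].append(record)
    return {
        model: {
            "requests": len(rows),
            "mean_latency_ms": mean(row["latency_ms"] for row in rows),
            "total_tokens": sum(row["total_tokens"] for row in rows),
        }
        for model, rows in grouped.items()
    }


if __name__ == "__main__":
    sample = [
        {"resolved_model": "databricks-main/custom-finetuned-llama-3", "latency_ms": 820, "total_tokens": 410},
        {"resolved_model": "anthropic-main/claude-sonnet-4-5", "latency_ms": 640, "total_tokens": 388},
        {"resolved_model": "databricks-main/custom-finetuned-llama-3", "latency_ms": 780, "total_tokens": 402},
    ]
    for model, stats in summarize_by_model(sample).items():
        print(model, stats)
```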

Databricks Jobs in TrueFoundry Workflows

TrueFoundry Workflows are built on Flyte and orchestrate multi-step pipelines as directed acyclic graphs of tasks. Each task runs in its own container with defined resources and dependencies. The Databricks integration adds a native task type that triggers existing Databricks jobs from within these workflows, so Spark-based data processing and model training can be composed alongside TrueFoundry-native tasks like model deployment and evaluation.

How the Databricks Task Works

A Databricks task uses the standard @task decorator with a DatabricksJobTaskConfig that specifies the Databricks workspace and the job to trigger. Under the hood, the task calls the Databricks Jobs API run_now() endpoint with an idempotency token derived from the Flyte execution ID. This ensures that the same logical workflow run never submits duplicate Databricks jobs, even if the task pod retries.

```python
from truefoundry.workflow import (
    DatabricksJobTaskConfig,
    TaskPythonBuild,
    task,
    workflow,
)


@task(
    task_config=DatabricksJobTaskConfig(
        image=TaskPythonBuild(
            pip_packages=["truefoundry[workflow]"],
        ),
        workspace_host="https://<your-workspace>.cloud.databricks.com",
        service_account="flyte-databricks-sa",
        job_id="123",
        timeout_seconds=2000,
    )
)
def run_databricks_training():
    print("Databricks training job complete")


@task
def deploy_model():
    # Deploy the trained model as an API endpoint
    pass


@workflow()
def train_and_deploy():
    run_databricks_training()
    deploy_model()
```
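To illustrate why a token derived from the execution ID prevents duplicates, here is one way such a token could be computed. The source does not specify the derivation, so the hashing scheme and both function names are assumptions; the payload fields job_id and idempotency_token are those of the Jobs API run-now request, and the token is kept to 64 characters.

```python
import hashlib


def idempotency_token(execution_id: str, task_name: str) -> str:
    """Derive a stable 64-character token for one task in one workflow run.

    Assumed scheme: SHA-256 over the execution ID and task name.
    """
    return hashlib.sha256(f"{execution_id}:{task_name}".encode()).hexdigest()


def run_now_payload(job_id: str, execution_id: str, task_name: str) -> dict:
    """Build the JSON body for a run-now call (not sent here)."""
    return {
        "job_id": int(job_id),
        "idempotency_token": idempotency_token(execution_id, task_name),
    }


if __name__ == "__main__":
    first = run_now_payload("123", "f3a9c1d2", "run_databricks_training")
    retry = run_now_payload("123", "f3a9c1d2", "run_databricks_training")
    print(first == retry, first)
```

A pod retry within the same execution rebuilds the same token, so Databricks can return the existing run instead of starting a new one, while a new workflow execution gets a different token and a fresh run.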

The task process itself runs in a lightweight container defined by the image field, while the actual job execution happens entirely in Databricks. By default, the task polls until the Databricks run terminates or until timeout_seconds elapses. If the timeout is reached, the Databricks run is cancelled and a RuntimeError is raised. If you set skip_wait_for_completion to True, the task returns immediately after triggering the job without waiting for it to finish.
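The wait behavior amounts to a poll loop with a deadline. The sketch below is not the task's implementation: `wait_for_run` is a hypothetical name, the state lookup and cancel call are injected as plain functions, the 10-second poll interval is an assumption, and the terminal states are the Databricks run life cycle states that indicate the run has finished.

```python
import time
from typing import Callable

TERMINAL_STATES = {"TERMINATED", "SKIPPED", "INTERNAL_ERROR"}


def wait_for_run(
    get_state: Callable[[], str],
    cancel: Callable[[], None],
    timeout_seconds: float,
    poll_seconds: float = 10.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll until the run reaches a terminal state or the timeout elapses.

    On timeout the run is cancelled and a RuntimeError is raised.
    """
    deadline = clock() + timeout_seconds
    while True:
        state = get_state()
        if state in TERMINAL_STATES:
            return state
        if clock() >= deadline:
            cancel()
            raise RuntimeError(f"run did not finish within {timeout_seconds} seconds")
        sleep(poll_seconds)


if __name__ == "__main__":
    states = iter(["PENDING", "RUNNING", "TERMINATED"])
    print(wait_for_run(lambda: next(states), cancel=lambda: None, timeout_seconds=60, sleep=lambda s: None))
```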

Configuration Surface

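The DatabricksJobTaskConfig fields referenced in this post are image, workspace_host, service_account, job_id, timeout_seconds, skip_wait_for_completion, and env.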

Authentication

The task supports two authentication methods. Personal Access Token authentication is used when DATABRICKS_PERSONAL_ACCESS_TOKEN is set in the task's environment. You inject the PAT via the env field in the task config using a secret reference, so the token is never hardcoded.

OAuth token federation is used when no PAT is set. It requires DATABRICKS_SERVICE_PRINCIPAL_CLIENT_ID in the environment and a Kubernetes service_account on the task pod. The Kubernetes service account token is exchanged for a Databricks access token via the workspace OIDC endpoint. The Databricks workspace must have OIDC federation configured for this path to work.

OAuth token federation avoids storing long-lived Databricks credentials entirely. The Kubernetes service account token is short-lived and scoped to the task pod, and the OIDC exchange happens at runtime, so there are no Databricks secrets to rotate or manage.
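The exchange itself is an OAuth 2.0 token exchange (RFC 8693). The sketch below only assembles the form fields and does not contact Databricks. `build_exchange_form` is a hypothetical helper, the grant type and subject token type are the standard RFC 8693 URNs, and the `all-apis` scope is an assumption to verify against your workspace's federation setup.

```python
from typing import Dict, Mapping

CLIENT_ID_ENV = "DATABRICKS_SERVICE_PRINCIPAL_CLIENT_ID"


def build_exchange_form(k8s_token: str, env: Mapping[str, str]) -> Dict[str, str]:
    """Assemble the form fields for exchanging a service account JWT.

    k8s_token is the pod's Kubernetes service account token; env is the
    task environment (for example os.environ).
    """
    client_id = env.get(CLIENT_ID_ENV)
    if not client_id:
        raise KeyError(f"{CLIENT_ID_ENV} must be set for OAuth token federation")
    return {
        "grant_type": "urn:ietf:params:oauth:grant-type:token-exchange",
        "subject_token_type": "urn:ietf:params:oauth:token-type:jwt",
        "subject_token": k8s_token.strip(),
        "client_id": client_id,
        "scope": "all-apis",  # assumed scope
    }


if __name__ == "__main__":
    form = build_exchange_form("eyJhbGciOi...", {CLIENT_ID_ENV: "00000000-0000-0000-0000-000000000000"})
    print(sorted(form))
```

Nothing in the form is a stored secret: the subject token is minted for the pod at runtime and the client ID is an identifier, which is what makes this path attractive compared with a PAT.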

Where This Fits

The typical use case is a pipeline where data preparation and model training run in Databricks (because that is where your Delta Lake data lives and where your Spark clusters are provisioned) and downstream steps like model registration, deployment, and evaluation run in TrueFoundry. The Databricks task bridges the two environments within a single workflow definition. You get Flyte's dependency resolution, retry semantics, and caching across the entire pipeline rather than stitching together separate orchestration systems.

Architecture Summary

The integration between Databricks and TrueFoundry operates at three layers. The AI Gateway layer registers Databricks Model Serving as a provider and routes inference traffic through the same unified endpoint that handles all other providers; within it, Virtual Models enable multi-provider routing with automatic failover between Databricks-hosted models and commercial APIs. The MCP Gateway layer sits in front of Databricks managed MCP servers and centralizes authentication, tool-level access control, and audit logging for agent workflows. The Workflow layer triggers Databricks jobs as native tasks inside Flyte-based pipelines, so Spark workloads compose with TrueFoundry deployment and evaluation steps in a single DAG.

No sidecars are required, and no SDK changes are needed in application code. The Gateway's adapter layer translates between OpenAI-compatible format and Databricks Model Serving format transparently. The only configuration required is provider registration with authentication credentials and the model endpoint mapping.

What keeps this integration clean is the zero-external-call architecture of the gateway plane. All authentication, authorization, and routing decisions are made against in-memory state synced via NATS. The only network hop added to any request is the gateway pod itself, which adds approximately 3 ms of latency. Everything else, including rate limiting, budget enforcement, health tracking, and telemetry collection, happens asynchronously without touching the request path.

For the full AI Gateway documentation see the TrueFoundry Docs. For Databricks provider setup see Databricks Models. For MCP Gateway configuration see MCP Gateway Overview. For workflow integration see Creating A Databricks Task.

