How to Host an AI Hackathon Without Losing Control of Your Keys or Budget: The TrueFoundry Architecture


The Midnight Hackathon Meltdown

It is your company’s internal AI hackathon, and one participant’s coding agent gets stuck in an unintended retry loop. It keeps firing long-context requests to a high-cost model for hours.

Because the organizers handed out raw provider keys to every participant, there are no team-level controls on spend or request velocity. By Monday morning, one runaway workflow has consumed a large share of the shared LLM budget and pushed the whole organization into provider rate limits.

That story lands because it is plausible. But the real lesson is broader: the right enterprise pattern for a hackathon is not distributing raw provider credentials and hoping teams behave. It is routing every request through a governed gateway that can separate teams, attach policy to metadata, and keep experimentation inside a controlled operating model.

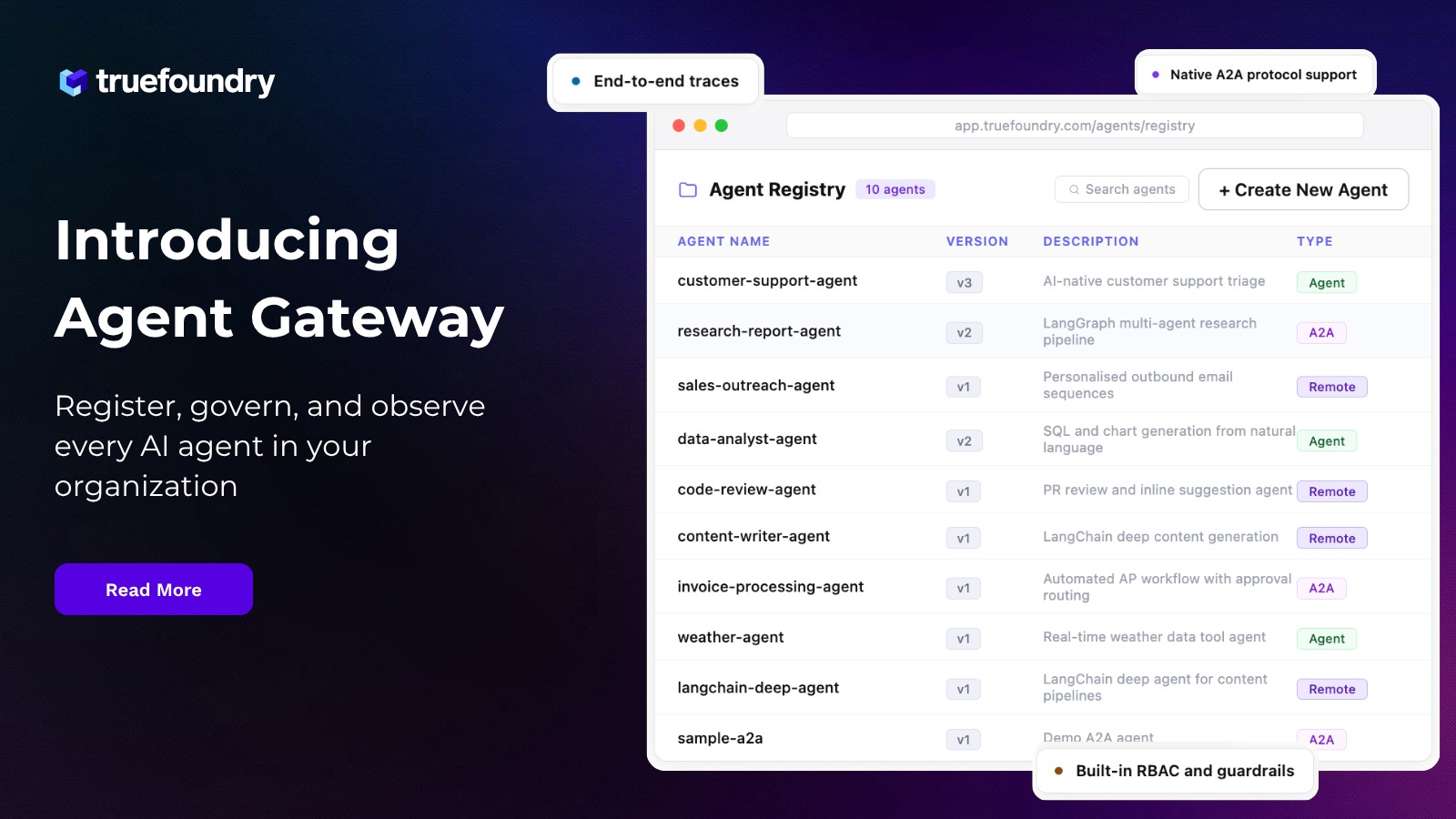

TrueFoundry is a strong fit for that pattern because it combines Kubernetes-native workspace boundaries, secret indirection, metadata-aware policy controls, agent guardrails, and a gateway-native playground. The claim is not that it guarantees ‘zero leaks’ or perfect hard-stop accounting under every burst pattern. The defensible claim is that it gives platform teams a credible control plane for running hackathons without turning them into unmanaged cost and security events.

1. Keep provider credentials abstracted from participants

The first rule of a secure hackathon is simple: participants should never need to see the raw provider API keys. Once a key is copied into notebooks, local environments, or agent config files, it becomes both a security problem and a billing problem.

TrueFoundry’s workspace model helps here because workspace isolation maps to Kubernetes namespace boundaries. In practice, that means workloads for one workspace run in a different namespace than workloads in another workspace, and provider credentials can be exposed through secret groups and secret FQNs rather than pasted directly into app manifests or source files.

That is the right architecture for hackathon teams. Give each squad a workspace, give workloads access only to the secret groups they need, and keep the actual provider credential under platform control the entire time. The user experience is still simple, but the blast radius is smaller and access is auditable.

- Use one workspace per team or per track, not one shared workspace for the whole event.

- Expose model access through managed credentials and gateway endpoints, not raw OpenAI or Anthropic keys.

- Treat secret groups as the credential boundary and workspace membership as the access boundary.
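The credential and access boundaries above can be sketched in a few lines. This is a minimal illustration of secret indirection, not TrueFoundry's implementation: the `secret-ref://` scheme, `SecretStore`, and `render_env` are hypothetical names invented for this example, and the platform's real secret FQN format may differ.

```python
from dataclasses import dataclass, field

# Hypothetical reference scheme for this sketch only.
REF_PREFIX = "secret-ref://"


@dataclass
class SecretStore:
    """Platform-held store; participants never read it directly."""
    groups: dict[str, dict[str, str]] = field(default_factory=dict)  # group -> {key: value}
    grants: dict[str, set[str]] = field(default_factory=dict)        # workspace -> readable groups

    def resolve(self, workspace: str, ref: str) -> str:
        # A reference looks like secret-ref://<group>/<key> in this sketch.
        group, _, key = ref[len(REF_PREFIX):].partition("/")
        if group not in self.grants.get(workspace, set()):
            raise PermissionError(f"{workspace} may not read group {group}")
        return self.groups[group][key]


def render_env(store: SecretStore, workspace: str, manifest_env: dict[str, str]) -> dict[str, str]:
    """Swap references for values at deploy time; plain values pass through."""
    return {
        name: store.resolve(workspace, value) if value.startswith(REF_PREFIX) else value
        for name, value in manifest_env.items()
    }
```

The manifest a team commits contains only the reference string, so copying the repository or a notebook does not copy the credential, and a workspace without a grant fails at resolution time instead of silently inheriting access.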

2. Enforce budget and rate policy from request metadata, not manual spreadsheets

The most important operational question in an AI hackathon is not whether you can see spending after the fact. It is whether the platform can evaluate policy on the request path before a runaway workload gets expensive.

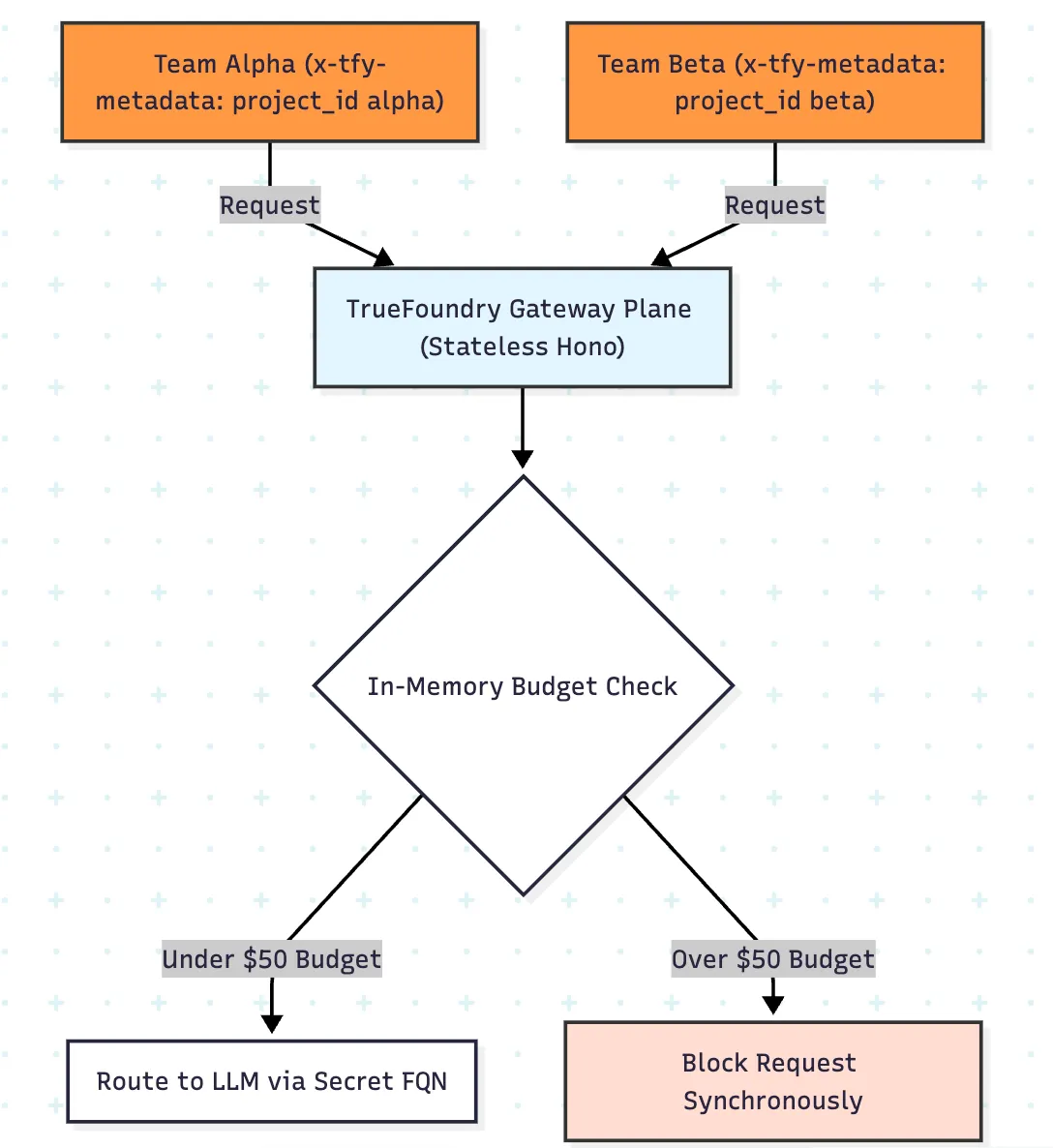

TrueFoundry’s gateway plane evaluates authentication, routing, guardrails, rate limits, and budget policy on the hot path using in-memory state, which enables low-latency enforcement before model invocation. That is materially better than a design where the only reliable cost view arrives after logs are processed downstream.

The especially useful part for hackathons is metadata scoping. Instead of hand-authoring one rule per team, you can attach team identity in x-tfy-metadata and apply policy dynamically with fields such as metadata.project_id. That means one budget rule and one rate-limit rule can fan out into isolated counters and spend envelopes per team.

- Per-team budgets stop one agent loop from eating the entire event budget.

- Per-team rate limits prevent a single squad from exhausting shared provider throughput.

- Metadata-based policy scales operationally better than maintaining dozens of static rule variants.
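To make the fan-out idea concrete, here is a minimal in-memory sketch of one budget rule and one rate-limit rule scoped by a metadata field. It assumes the x-tfy-metadata header carries a JSON object; the `ScopedPolicy` class, its fixed-window rate limit, and the dollar figures are illustrative assumptions, not the gateway's actual rule engine.

```python
import json
from collections import defaultdict


class ScopedPolicy:
    """One budget rule and one rate rule, fanned out per metadata value."""

    def __init__(self, scope_field, budget_usd, max_requests, window_s):
        self.scope_field = scope_field
        self.budget_usd = budget_usd
        self.max_requests = max_requests
        self.window_s = window_s
        self.spend = defaultdict(float)  # scope value -> USD spent so far
        self.windows = {}                # scope value -> (window start, request count)

    def check(self, headers, now):
        """Decide before model invocation; returns (allowed, reason)."""
        metadata = json.loads(headers.get("x-tfy-metadata", "{}"))
        scope = metadata.get(self.scope_field)
        if scope is None:
            return False, f"missing metadata.{self.scope_field}"
        if self.spend[scope] >= self.budget_usd:
            return False, "budget exhausted"
        start, count = self.windows.get(scope, (now, 0))
        if now - start >= self.window_s:
            start, count = now, 0  # fixed window expired, start a new one
        if count >= self.max_requests:
            return False, "rate limited"
        self.windows[scope] = (start, count + 1)
        return True, "ok"

    def record_cost(self, scope, usd):
        """Called after a response, once the request's cost is known."""
        self.spend[scope] += usd
```

Because cost is recorded only after a response arrives, requests already in flight can overshoot the budget slightly. That is the same reason hard-stop accounting under bursts should not be promised as exact.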

3. Guard hackathon agents with a four-hook control model

Hackathons are where teams try MCP servers, tool-calling agents, database connectors, and internal APIs. That is exactly where a traditional LLM-only security model starts to break down.

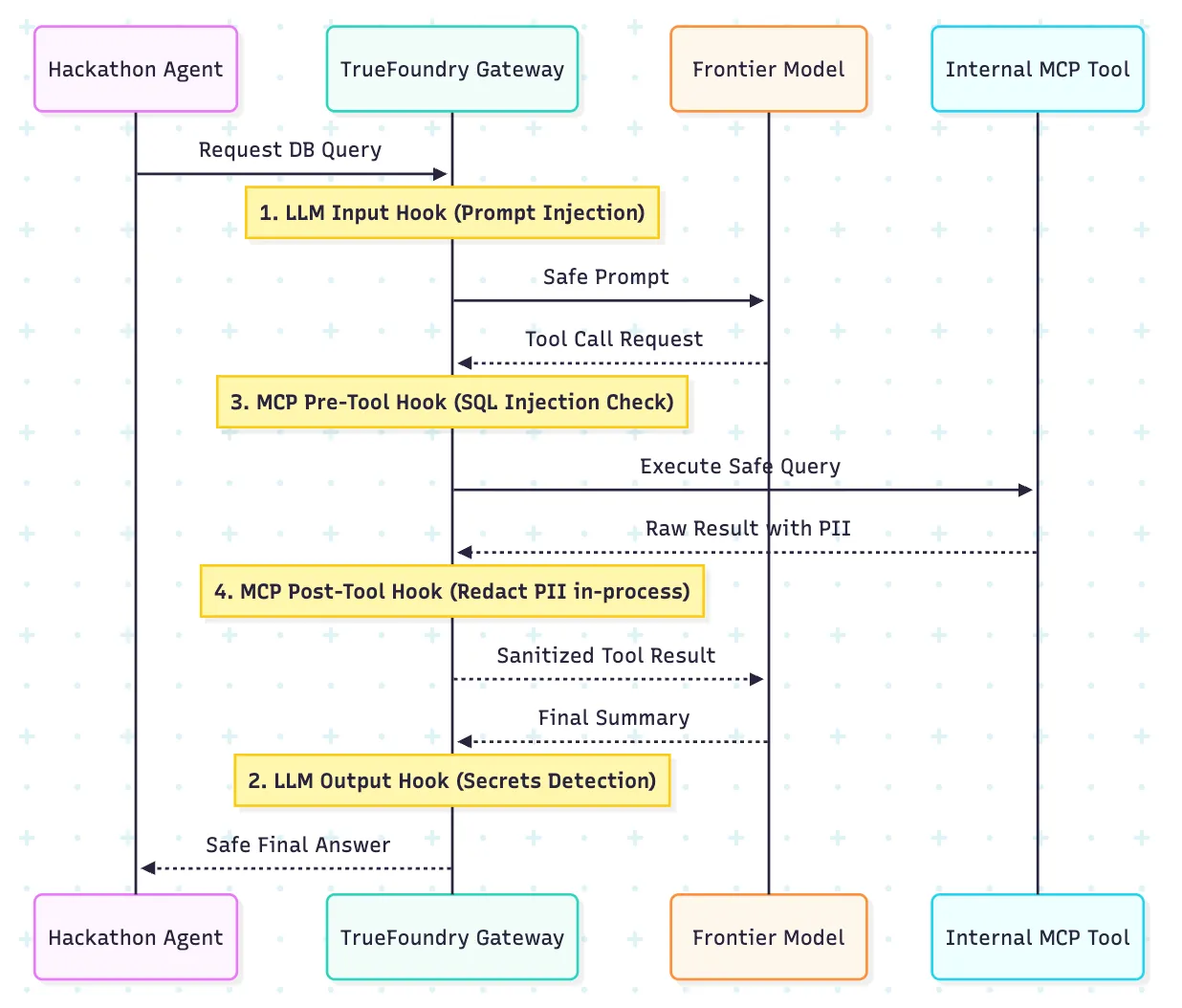

TrueFoundry’s guardrail model is especially relevant here because it exposes four execution points: LLM input, LLM output, MCP pre-tool, and MCP post-tool. That gives platform teams a more operational way to govern agents than relying on a single generic filter in front of the model.

The useful distinction is that different risks show up at different stages. Prompt injection may appear on the way in. Unsafe tool arguments appear before execution. Sensitive records may appear only after the tool returns. A four-hook model lets you place the right control at the right point in the flow.

- Hook 1: LLM input — inspect prompts before model invocation for policy violations, prompt-injection patterns, or obvious secrets and sensitive context.

- Hook 2: LLM output — inspect model responses before they return to the user or the next step in the chain, so policy violations or leaked secrets can be filtered early.

- Hook 3: MCP pre-tool — validate tool parameters, permissions, and high-risk actions before execution, such as destructive SQL, broad file access, or calls to sensitive internal systems.

- Hook 4: MCP post-tool — inspect tool results before they flow back into model context, so PII, secrets, or internal-only data can be redacted or blocked before the agent continues.

This is also where in-process detection matters. If secret scanning and related checks can run inside the gateway path without an extra outbound dependency, the control model is easier to reason about during a live event. Keep baseline guardrails common across teams, then layer stricter policies for teams using sensitive tools or datasets.
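The four execution points can be illustrated with a small in-process sketch. Everything here is an assumption made for the example: the function names, the regular expressions, and the `Blocked` exception are hypothetical, and real guardrails would use far more robust detectors than three patterns.

```python
import re

# Illustrative patterns only; not production-grade detectors.
SECRET_PATTERN = re.compile(r"sk-[A-Za-z0-9]{16,}")
DESTRUCTIVE_SQL = re.compile(r"^\s*(drop|truncate|delete)\b", re.IGNORECASE)
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


class Blocked(Exception):
    """Raised when a hook refuses to let the flow continue."""


def llm_input(prompt):
    # Hook 1: stop prompts that carry something shaped like a credential.
    if SECRET_PATTERN.search(prompt):
        raise Blocked("secret-like token in prompt")


def llm_output(text):
    # Hook 2: redact secret-like tokens before the response leaves the gateway.
    return SECRET_PATTERN.sub("[REDACTED]", text)


def mcp_pre_tool(tool_name, args):
    # Hook 3: refuse destructive SQL before the tool runs.
    if tool_name == "sql" and DESTRUCTIVE_SQL.match(args.get("query", "")):
        raise Blocked("destructive SQL")


def mcp_post_tool(result):
    # Hook 4: redact email addresses before results re-enter model context.
    return EMAIL.sub("[EMAIL]", result)


def guarded_completion(prompt, model_fn):
    llm_input(prompt)
    return llm_output(model_fn(prompt))


def guarded_tool_call(tool_name, args, tool_fn):
    mcp_pre_tool(tool_name, args)
    return mcp_post_tool(tool_fn(**args))
```

All four checks run in the same process as the call they wrap, with no outbound dependency, which is the property that makes the control model easier to reason about during a live event.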

4. Let teams iterate fast, but only through the governed path

A secure hackathon still has to feel fast. If teams need a ticket every time they want to try a prompt, they will route around the platform. The answer is not less control. The answer is making the controlled path the easiest path.

This is where the gateway-native playground matters. The useful architectural point is that test traffic can flow through the same gateway plane used for production policies, so teams can validate prompts, routing, and guardrails in-loop rather than discovering policy behavior only after deployment.

The developer experience also gets better when the platform exposes response-level debugging signals. Headers such as x-tfy-resolved-model and x-tfy-applied-configurations, plus server-timing breakdowns, help teams understand what actually happened on a test request instead of guessing whether a fallback, guardrail, or routing rule fired.

- Use the playground as the official testing surface during the event.

- Teach teams to read resolved-model and applied-configuration signals, not just the model output.

- Make the code snippet copied from the tested configuration the default starting point for every team.
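Reading the debugging signals can be scripted so teams do not have to inspect raw headers by hand. The sketch below assumes only that the response carries the two x-tfy headers named above plus a standard Server-Timing header. The value format of x-tfy-applied-configurations is not specified here, so it is returned as the raw string, and the metric names in the usage example are made up.

```python
def parse_server_timing(value):
    """Parse a Server-Timing header into {metric_name: duration_ms}.

    Naive on purpose: it splits on commas, so a quoted desc that itself
    contains a comma would be mis-split.
    """
    timings = {}
    for entry in value.split(","):
        name, *params = [part.strip() for part in entry.split(";")]
        if not name:
            continue
        for param in params:
            key, _, raw = param.partition("=")
            if key.strip().lower() == "dur":
                try:
                    timings[name] = float(raw.strip().strip('"'))
                except ValueError:
                    pass  # ignore malformed durations
    return timings


def summarize_debug_headers(headers):
    """Collect the routing and timing signals from a response's headers."""
    lowered = {k.lower(): v for k, v in headers.items()}
    return {
        "resolved_model": lowered.get("x-tfy-resolved-model"),
        "applied_configurations": lowered.get("x-tfy-applied-configurations"),
        "timings_ms": parse_server_timing(lowered.get("server-timing", "")),
    }


# Usage with made-up header values:
# summarize_debug_headers({
#     "X-TFY-Resolved-Model": "some-provider/some-model",
#     "Server-Timing": "guardrails;dur=3.2, model;dur=812",
# })
```

Comparing the resolved model with the model the team asked for is a quick way to see whether a fallback or routing rule fired on a test request.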

5. Be precise about residency, deployment, and observability

Enterprise readers will push back immediately if a post over-promises on data residency. They should. The useful claim is not that every deployment is magically ‘air-gapped.’ It is that the split-plane design lets teams run the gateway plane on their own infrastructure while keeping the hot path for inference, policy checks, and model access under tighter operational control.

The other half of the story is observability. A hackathon is easier to manage when the platform team can see traces, latency, and policy behavior quickly. But observability is also a data-governance surface. If prompt or response data is exported for analytics, that needs to be an intentional choice with the right retention and destination controls.

The residency story gets stronger when you describe deployment mode, logging behavior, and export paths explicitly. That builds more trust than saying ‘zero leak’ and hoping the reader does not ask follow-up questions.

A better operating model for hackathon owners

An explicit owner workflow turns the architecture above into an execution guide.

1. One week before the event: define the control model

Create one workspace per team or per competition track. Decide the allowed models, the default provider path, the per-team budget, the per-team rate limit, and which teams may use MCP tools or sensitive internal data.
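One way to keep these decisions reviewable is to write them down as data and validate them before the event. The sketch below is an assumption about how an organizer might do that in plain Python; `TeamControls` and `validate_controls` are hypothetical names, not a TrueFoundry configuration schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TeamControls:
    workspace: str
    allowed_models: tuple[str, ...]
    budget_usd: float
    requests_per_minute: int
    mcp_tools_enabled: bool = False  # off unless explicitly granted


def validate_controls(controls, event_budget_usd):
    """Return a list of problems; an empty list means the plan is consistent."""
    problems = []
    names = [c.workspace for c in controls]
    if len(set(names)) != len(names):
        problems.append("duplicate workspace names")
    total = sum(c.budget_usd for c in controls)
    if total > event_budget_usd:
        problems.append(f"team budgets total {total} but event budget is {event_budget_usd}")
    for c in controls:
        if not c.allowed_models:
            problems.append(f"{c.workspace}: no allowed models")
        if c.requests_per_minute <= 0:
            problems.append(f"{c.workspace}: rate limit must be positive")
    return problems
```

Checking that the per-team budgets sum to no more than the event budget catches the most common planning mistake before any team sends a request.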

2. Before kickoff: preload the safe path

Publish a small starter kit for participants: the gateway endpoint, the required metadata shape, example SDK snippets, and a short guide to the playground. Teams should begin from the governed path, not from raw provider dashboards.

3. At registration: assign every team a project_id

Make project_id the required metadata field from day one. That gives you clean spend segmentation, cleaner tracing, cleaner incident review, and less manual mapping later.
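Assigning identifiers at registration can be automated so every team starts with a unique project_id and a ready-made header. This is a minimal sketch: the slug rules and helper names are assumptions for illustration, and it assumes the x-tfy-metadata header value is a JSON object.

```python
import json
import re


def slugify(name):
    """Lowercase ASCII slug; falls back to 'team' if nothing usable remains."""
    slug = re.sub(r"[^a-z0-9]+", "-", name.lower()).strip("-")
    return slug or "team"


def assign_project_ids(team_names):
    """Map each team name to a unique project_id, suffixing on collisions."""
    ids, used = {}, set()
    for name in team_names:
        base = slugify(name)
        candidate, n = base, 1
        while candidate in used:
            n += 1
            candidate = f"{base}-{n}"
        used.add(candidate)
        ids[name] = candidate
    return ids


def metadata_header(project_id):
    """Header dict a team's starter snippet attaches to every request."""
    return {"x-tfy-metadata": json.dumps({"project_id": project_id})}
```

Handing each team the output of `metadata_header` in the starter kit means the required field is present from the first request, rather than being reconstructed from logs later.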

4. During build hours: monitor the event like a live system

Watch team-level spend, rate-limit pressure, and unusual trace patterns. The goal is to rescue teams early, not only to analyze failures later.
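A simple threshold check is often enough to surface teams that need help before they hit a hard limit. The sketch below assumes the organizer can obtain spend per team from whatever reporting the platform provides; the function name and the 50/80/100 percent thresholds are illustrative choices.

```python
def spend_alerts(spend_by_team, budget_usd, thresholds=(0.5, 0.8, 1.0)):
    """Return (team, highest threshold crossed, fraction of budget used)."""
    alerts = []
    for team, spent in sorted(spend_by_team.items()):
        crossed = [t for t in thresholds if spent >= t * budget_usd]
        if crossed:
            alerts.append((team, max(crossed), round(spent / budget_usd, 2)))
    return alerts
```

A team that crosses the 80 percent threshold in the first hour is a candidate for a quick conversation about retry loops, well before the budget policy has to block anything.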

5. For agent teams: require tool review before broad access

If a team wants database access, MCP servers, or internal APIs, move them onto a stricter guardrail profile before you enable those tools. Agent experiments should graduate into more trust, not start there.

6. Before demos: force a final playground pass

Have each team validate its final flow through the playground or the official test surface. That catches missing metadata, unexpected routing, and guardrail surprises before demo time.

7. After the event: turn observations into platform defaults

Review the traces, budget incidents, blocked calls, and support questions. Then convert the best practices into default workspace templates, snippets, and policy baselines for the next hackathon.

The final verdict

The core thesis is simple: if you are running an enterprise AI hackathon, the safest pattern is not handing out raw provider keys. It is routing requests through a gateway that can separate teams, meter spend, control throughput, and govern agent workflows.

A skeptical buyer can believe that story because it does not over-promise. TrueFoundry’s strongest hackathon story is not a vague promise of total safety. It is a practical combination of workspace isolation, secret indirection, metadata-scoped policy, governed agent hooks, request-path controls, and a playground that helps teams test through the same policy surface they will ship through.

That is enough. Your hackers still get to build the future. Your platform, security, and finance teams just do not have to lose a weekend in the process.
