RAG Architecture Explained: Building Reliable LLM Systems with Retrieval

Large language models (LLMs) are excellent at generating fluent responses, but they come with important limitations. Their knowledge is fixed at the time of training, which means they can produce outdated information. They may also hallucinate, generating confident but incorrect answers. Supplying extra text in a prompt affects only that one response; it does not update the model’s weights, so the model never permanently learns the new facts.

To address this, Retrieval Augmented Generation (RAG) introduces a more reliable approach by fetching relevant, up-to-date information before generating a response. This helps ground the model’s outputs in real, verifiable data.

In this blog, we explore what RAG architecture is, how it works, and the key design decisions that determine its effectiveness.

What is RAG architecture?

Retrieval augmented generation (RAG) is an architectural approach that improves an artificial intelligence (AI) model's performance by linking it to external knowledge bases like internal organizational data, journals, and specialized datasets.

RAG architecture enables Large Language Models (LLMs) to provide more relevant, higher-quality responses. Instead of relying solely on static training data, RAG retrieves relevant documents at query time and supplies them to the model as context.

At a high level, RAG helps with:

- Reducing hallucinations

- Providing up-to-date responses

- Enabling domain-specific knowledge without fine-tuning

What are the components of RAG architecture?

A Retrieval Augmented Generation (RAG) architecture is built around a few core components that work together to produce accurate, context-aware responses.

Retriever: The retriever is responsible for searching external data sources, such as documents or databases, to find information relevant to the user’s query. It ensures the system pulls in the most useful context before generating a response.

Generator: The generator is the LLM that takes both the original query and the retrieved context to produce a grounded and coherent answer. This step reduces hallucinations and improves factual accuracy.

Vector Database: A vector database stores data as embeddings (numerical representations of meaning). It enables fast, semantic search, allowing the retriever to efficiently find the most relevant information even when exact keywords don’t match.

High-level RAG architecture overview

A typical RAG architecture consists of four major steps: document ingestion, embedding and indexing, retrieval, and generation. While the overall flow appears simple, each layer has its own trade-offs, which directly impact response quality, latency, and cost.

Document Ingestion and Chunking

Before retrieval, raw documents must be split into chunks so they can be searched effectively. Chunk size, overlap strategy, and document structure all affect retrieval accuracy. With overlap, a small portion from the end of one chunk is repeated at the start of the next, so context is not lost at chunk boundaries. Smaller chunks improve precision but lose context, while larger chunks preserve context but add noise.
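As a rough illustration of chunking with overlap, here is a minimal sketch that splits text on whitespace. The function name `chunk_text` and the default sizes are assumptions made for this example; production pipelines often count tokens and split on document structure (headings, paragraphs) instead of raw words.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into word-based chunks.

    Each chunk starts with the last `overlap` words of the previous chunk,
    so a sentence cut at a boundary still appears whole in one of them.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the window already reached the end of the text
    return chunks


sample = " ".join(str(i) for i in range(10))  # "0 1 2 ... 9"
print(chunk_text(sample, chunk_size=4, overlap=1))
# prints ['0 1 2 3', '3 4 5 6', '6 7 8 9']
```

Note how the last word of each chunk reappears as the first word of the next. Raising `overlap` preserves more boundary context at the price of storing and embedding more duplicated text.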

Embedding Generation

Each chunk is converted into a vector using an embedding model. Both the user’s query (prompt) and the knowledge base documents are embedded into the same vector space, which puts them in a comparable format so that relevance can be measured as vector similarity.

The embedding model choice affects semantic recall and system latency. Higher-quality embeddings improve retrieval relevance but increase computational cost.
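To make the idea of a "comparable format" concrete, the sketch below embeds a query and a document with the same function and compares them with cosine similarity. `toy_embed` is a stand-in invented for this example: it hashes words into a fixed-size count vector, so it only captures word overlap, not meaning. A real system would call a trained embedding model at this point.

```python
import hashlib
import math


def toy_embed(text: str, dim: int = 64) -> list[float]:
    """Hypothetical stand-in for an embedding model (hashed bag of words)."""
    vec = [0.0] * dim
    for token in text.lower().split():
        digest = hashlib.md5(token.encode("utf-8")).digest()
        index = int.from_bytes(digest[:4], "big") % dim
        vec[index] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    # Normalize to unit length so vector magnitude does not affect similarity.
    return [v / norm for v in vec] if norm else vec


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


query_vec = toy_embed("reset my password")
doc_vec = toy_embed("password reset steps")
print(cosine_similarity(query_vec, doc_vec))  # positive: the texts share words
```

Because the toy function matches words rather than meaning, it would miss a paraphrase such as "recover account access". Closing that gap is what a higher-quality embedding model buys, at a higher computational cost.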

Retrieval Layer

At query time, the user’s input is embedded and matched against stored vectors. The top-k most relevant chunks are retrieved based on similarity. However, a higher k does not always yield better results: retrieving too much context can overwhelm the LLM and produce unfocused answers.
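The top-k step can be sketched as a brute-force scan over stored vectors. The in-memory `index` list and the `top_k` helper are assumptions made for illustration; a vector database would typically replace the linear scan with an approximate nearest-neighbor index.

```python
import heapq
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query_vec: list[float],
          index: list[tuple[str, list[float]]],
          k: int = 3) -> list[tuple[float, str]]:
    """Return the k best (score, chunk_id) pairs, highest score first."""
    scored = ((cosine(query_vec, vec), chunk_id) for chunk_id, vec in index)
    return heapq.nlargest(k, scored)


# Tiny hand-made index: (chunk_id, vector) pairs.
index = [("a", [1.0, 0.0]), ("b", [0.0, 1.0]), ("c", [1.0, 1.0])]
results = top_k([1.0, 0.0], index, k=2)
print([chunk_id for _, chunk_id in results])  # prints ['a', 'c']
```

Keeping the scores alongside the chunk ids is a deliberate choice here: later stages can use them to drop weak matches instead of always passing exactly k chunks to the model.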

Prompt Construction and Generation

An augmented prompt merges the original user query with relevant retrieved text chunks to form a structured context. Prompt structure is essential for grounding the output. Poor formatting or unclear instructions can cause the model to ignore the retrieved context. The final synthesized response is then delivered to the user.
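One possible way to structure an augmented prompt is shown below. The template wording, the numbered `[n]` labels, and the `build_prompt` name are illustrative assumptions, not a prescribed format; the point is that the instructions, the context, and the question are clearly separated.

```python
def build_prompt(question: str, chunks: list[str]) -> str:
    """Merge the user question with retrieved chunks into one grounded prompt."""
    # Number each chunk so the model (and a human reviewer) can refer to it.
    context = "\n\n".join(
        f"[{i}] {chunk.strip()}" for i, chunk in enumerate(chunks, start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say you do not know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question.strip()}\n"
        "Answer:"
    )


print(build_prompt(
    "How do I reset my password?",
    ["Go to Settings > Security and choose Reset password.",
     "Passwords must be at least 12 characters long."],
))
```

The explicit "say you do not know" instruction gives the model a fallback when retrieval returns nothing useful, which is one way to discourage it from answering from memory alone.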

What are the benefits of RAG architecture?

Retrieval Augmented Generation (RAG) enhances LLM performance by combining generation with real-time data retrieval, making systems more practical and reliable. Here are some benefits of RAG architecture:

- Accuracy & Reliability: By grounding responses in verified external sources, RAG significantly reduces hallucinations and improves the factual correctness of outputs.

- Up-to-date Knowledge: RAG enables access to real-time or frequently updated data, eliminating the need for constant retraining of models.

- Data Security: It allows organizations to use proprietary or sensitive data securely, since the data stays in an external store and is not trained into the model’s weights.

- Cost-Effective: Compared to fine-tuning or training models, RAG is more efficient and scalable, reducing both computational costs and maintenance effort.

What are the common RAG design mistakes?

Even a well-designed RAG architecture can underperform due to subtle but critical design choices. Avoiding these common mistakes is key to maintaining accuracy and reliability in production:

Treating RAG as a One-Time Setup

RAG is not static. As data and user behavior evolve, retrieval quality can silently degrade. Without continuous evaluation and re-indexing, systems may still run but produce outdated or irrelevant responses.
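Continuous evaluation can start small. The sketch below computes a hit rate at k over a labeled set of queries; the `retrieve` callable, the evaluation-set format, and the sample data are all assumptions made for this example. Rerunning such a check after every re-index or data refresh makes silent degradation visible.

```python
from typing import Callable, Iterable


def hit_rate_at_k(eval_set: list[tuple[str, Iterable[str]]],
                  retrieve: Callable[[str, int], list[str]],
                  k: int = 5) -> float:
    """Fraction of queries where at least one relevant chunk id is in the top k."""
    if not eval_set:
        return 0.0
    hits = 0
    for query, relevant_ids in eval_set:
        retrieved = retrieve(query, k)[:k]
        if set(retrieved) & set(relevant_ids):
            hits += 1
    return hits / len(eval_set)


# Hypothetical retriever backed by a fixed lookup table, for demonstration only.
fake_results = {
    "reset password": ["doc-7", "doc-2"],
    "refund policy": ["doc-9", "doc-4"],
}


def fake_retrieve(query: str, k: int) -> list[str]:
    return fake_results.get(query, [])[:k]


eval_set = [("reset password", ["doc-2"]), ("refund policy", ["doc-1"])]
print(hit_rate_at_k(eval_set, fake_retrieve, k=2))  # prints 0.5
```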

Using Default Chunk Sizes

Default chunking rarely fits real data. Small chunks improve precision but lose context, while large chunks add noise. Chunk size should be tuned based on actual queries.

Over-Retrieving Context

More context isn’t always better. Too many documents can overwhelm the model, leading to unfocused or inaccurate answers. Balanced retrieval is key.
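One way to keep retrieval balanced is to filter by similarity score and cap the total context size, rather than always passing a fixed number of chunks. The thresholds and the word-count budget below are placeholder values chosen for this sketch; real systems usually budget in tokens and tune both limits against evaluation data.

```python
def select_context(scored_chunks: list[tuple[float, str]],
                   min_score: float = 0.3,
                   max_words: int = 300) -> list[str]:
    """Keep the best-scoring chunks that clear min_score and fit in max_words."""
    selected: list[str] = []
    used = 0
    for score, text in sorted(scored_chunks, key=lambda pair: pair[0], reverse=True):
        if score < min_score:
            break  # everything after this point scores even lower
        n_words = len(text.split())
        if used + n_words > max_words:
            continue  # too large for the remaining budget; try a smaller chunk
        selected.append(text)
        used += n_words
    return selected


candidates = [(0.9, "a b c"), (0.2, "x y"), (0.5, "d e f g")]
print(select_context(candidates, min_score=0.3, max_words=5))
# prints ['a b c']
```

In the example, the 0.5 chunk is skipped because it would exceed the five-word budget, and the 0.2 chunk is dropped for scoring below the threshold.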

What is the difference between Retrieval-Augmented Generation and semantic search?

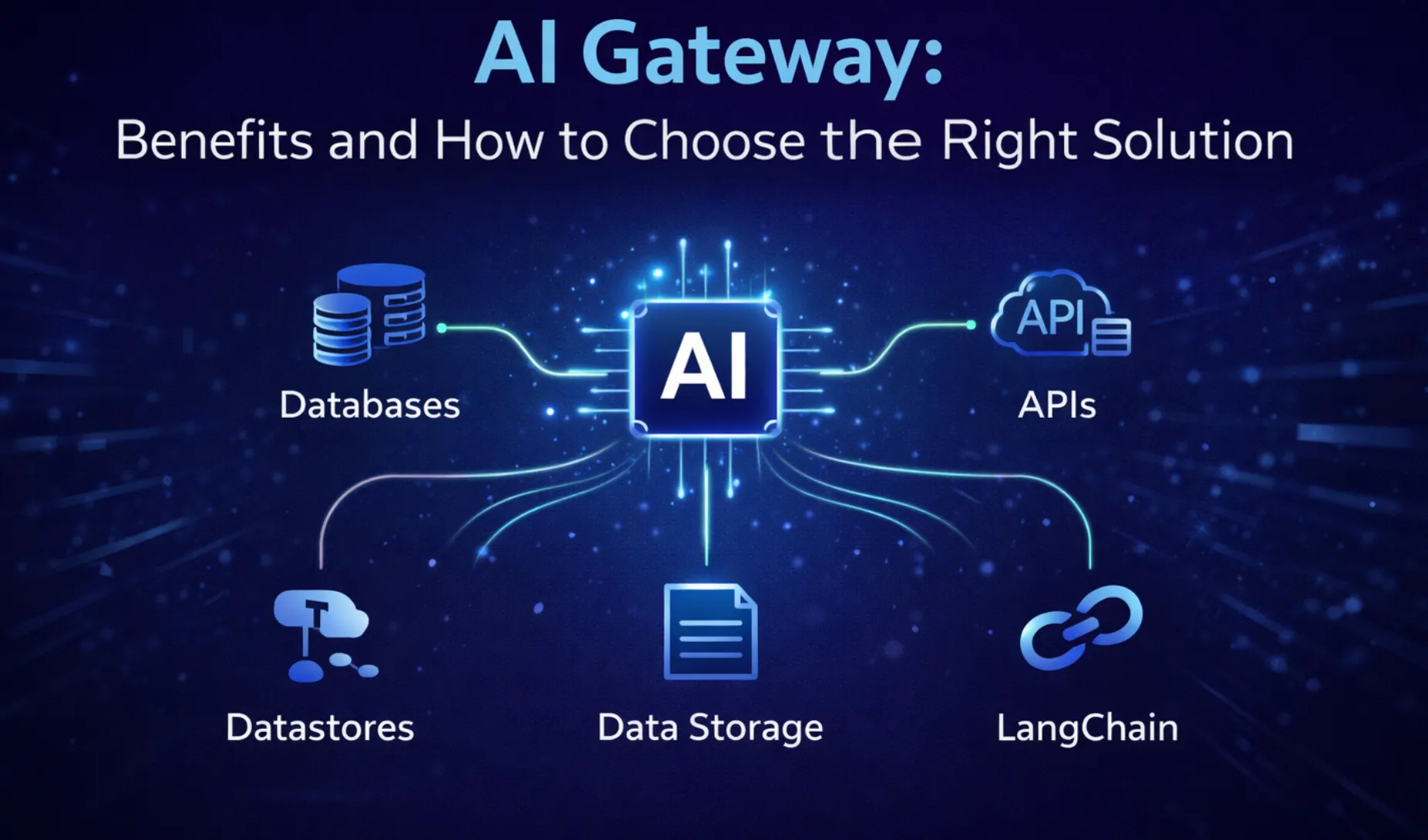

Semantic search focuses on accurately retrieving relevant information from large and diverse data sources. Enterprises often store massive volumes of content (manuals, FAQs, reports, and internal documents) across multiple systems, making retrieval difficult at scale.

Semantic search solves this by understanding intent and meaning, not just keywords. It can locate precise passages that answer a query, even if the wording differs. This improves context retrieval and reduces the effort required to prepare and structure data, as it handles relevance ranking and knowledge extraction efficiently.

On the other hand, RAG builds on semantic search by adding a generation layer. After retrieving the most relevant context, it feeds that information into an LLM to generate a clear, structured response.

Instead of returning raw passages, RAG transforms retrieved knowledge into a direct answer. This is especially useful in applications like support bots or internal assistants, where users expect concise, ready-to-use responses rather than multiple document results.

Putting it simply, semantic search improves how systems find relevant information across large datasets, while RAG ensures that this information is used effectively by generating accurate, context-aware answers. In practice, semantic search often acts as a core component within a RAG pipeline.
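The relationship between the two can be sketched in a few lines. Both callables here are hypothetical placeholders: `search` stands for any semantic search backend that returns passages, and `generate` stands for any function that sends a prompt to an LLM and returns its text.

```python
from typing import Callable

Search = Callable[[str, int], list[str]]  # hypothetical: (query, k) -> passages
Generate = Callable[[str], str]           # hypothetical: prompt -> LLM output


def semantic_search(query: str, search: Search, k: int = 3) -> list[str]:
    """Semantic search stops at retrieval: the user gets raw passages back."""
    return search(query, k)


def rag_answer(query: str, search: Search, generate: Generate, k: int = 3) -> str:
    """RAG reuses the same retrieval step, then adds a generation step."""
    passages = search(query, k)
    context = "\n".join(f"- {passage}" for passage in passages)
    prompt = f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    return generate(prompt)


# Stub implementations so the sketch runs without any external service.
def stub_search(query: str, k: int) -> list[str]:
    corpus = ["Reset via Settings > Security.", "Passwords expire after 90 days."]
    return corpus[:k]


def stub_generate(prompt: str) -> str:
    return "Open Settings > Security and choose Reset."


print(semantic_search("reset password", stub_search))        # list of passages
print(rag_answer("reset password", stub_search, stub_generate))  # single answer
```

The only difference between the two functions is the final `generate` call, which mirrors the point above: semantic search is a component inside the RAG pipeline.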

What are the real world trade-offs in RAG architecture?

No RAG architecture optimizes all metrics simultaneously. Each design decision involves balancing competing priorities.

Accuracy vs Latency

Improving answer accuracy often requires deeper retrieval, longer prompts, and higher-quality embeddings, all of which increase latency. In user-facing applications, even small delays can noticeably hurt the user experience. It is therefore better to decide early whether the system prioritizes correctness or responsiveness, and to tune retrieval accordingly.

Cost vs Retrieval Quality

High-quality embeddings and frequent re-indexing improve retrieval relevance but raise operational costs. For large document collections, these costs scale rapidly. Many teams adopt hybrid approaches, using high-quality embeddings for critical documents and relaxing constraints elsewhere.

Simplicity vs Control

End-to-end RAG frameworks simplify development but often conceal key tuning parameters. Custom pipelines provide more control but increase engineering complexity. The right balance depends on team maturity and long-term maintenance expectations.

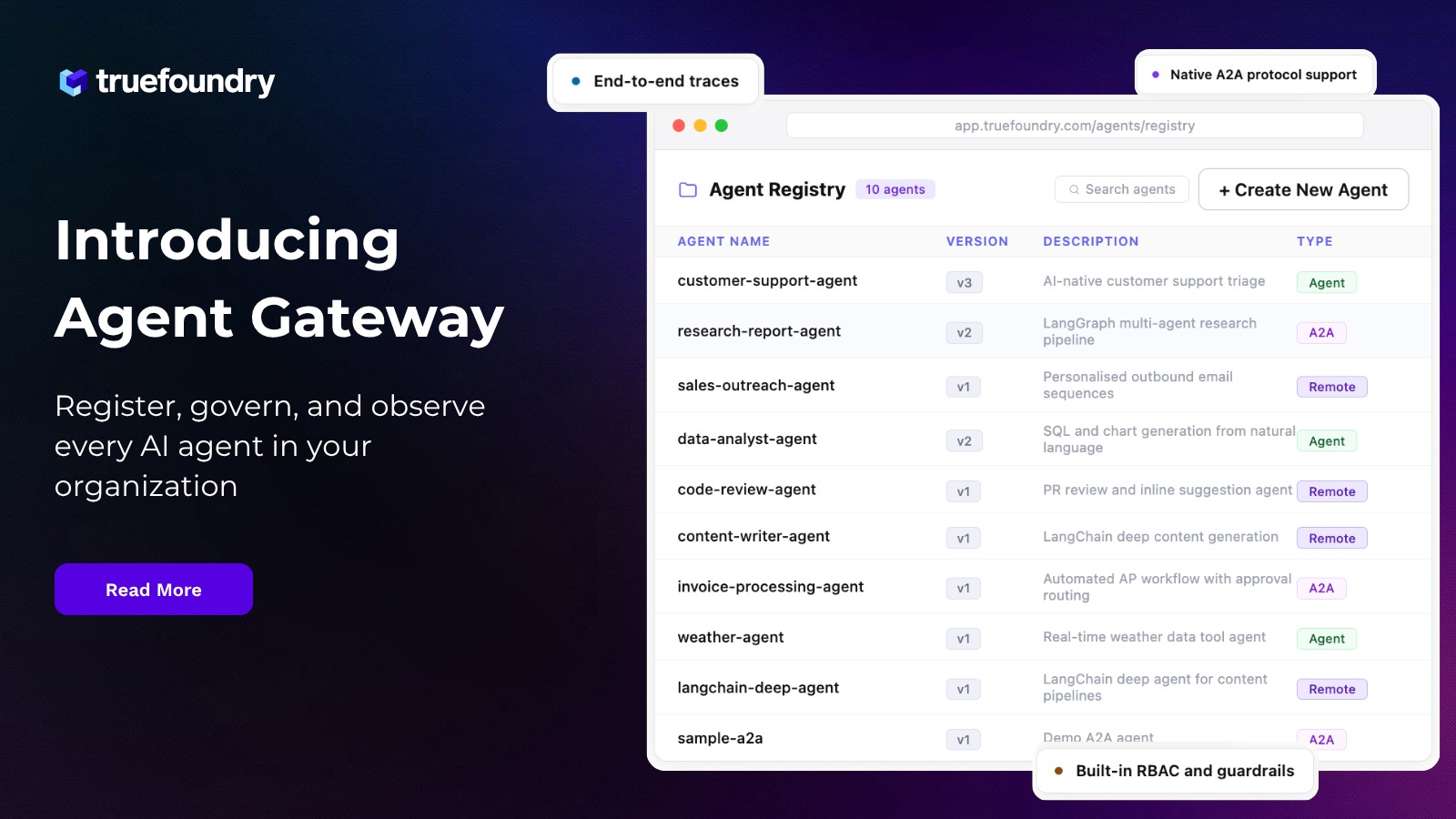

These trade-offs matter because RAG architecture failures rarely stem from a single broken component, especially when the system is deployed behind an AI gateway. They arise from subtle architectural decisions interacting over time. Teams that acknowledge these trade-offs build systems that are easier to debug, adapt, and trust.

When is RAG (and when isn’t it) the right choice?

Choosing Retrieval-Augmented Generation (RAG) depends on the type of problem you’re solving and the nature of your data.

When RAG is a good choice

RAG architecture works best when applications require accurate, up-to-date, and context-specific information. It is ideal for use cases like support bots, internal assistants, or knowledge retrieval systems that rely on large and frequently changing document sets.

It’s especially useful when:

- Data is dynamic or frequently updated

- Information is spread across multiple sources

- Responses must be grounded in reliable, external content

When RAG is not the right choice

RAG architecture may not be necessary for tasks that rely on general knowledge or simple reasoning. For example, basic chat, creative writing, or straightforward math problems can be handled directly by an LLM without retrieval.

It’s less suitable when:

- The knowledge is static and well-covered by the model

- Low latency is critical and retrieval adds overhead

- High-quality structured APIs can directly provide answers

In short, use RAG when you need fresh, verifiable knowledge, and avoid it when the model alone is sufficient.

Conclusion

RAG is not a feature you toggle on; it is a system whose performance relies on thoughtful architectural choices. Teams that treat retrieval, embeddings, and prompt design as core components build more reliable LLM applications.

A well-designed RAG architecture transforms large language models into dependable production systems.

Frequently Asked Questions

What is RAG architecture?

Retrieval Augmented Generation (RAG) architecture combines information retrieval with language generation. It retrieves relevant data from external sources and feeds it to an LLM to generate accurate, context-aware responses. This approach improves reliability, reduces hallucinations, and enables AI systems to use up-to-date and domain-specific knowledge effectively.

What are the 4 levels of RAG?

The four levels of RAG typically include basic retrieval, reranking, context optimization, and advanced orchestration. Systems evolve from simple document lookup to refined pipelines with chunking, ranking, caching, and feedback loops. Higher levels focus on improving relevance, latency, and response quality for production-grade, real-world LLM applications.

What are some real-world examples of RAG architecture?

RAG is used in support bots, internal knowledge assistants, and enterprise search systems. Examples include customer service chatbots retrieving FAQs, healthcare assistants accessing medical guidelines, and finance tools analyzing reports. It also powers developer copilots and document Q&A systems where accurate, context-grounded responses are essential.
